
Welcome to our blog.

2026 State of AI in Security & Development

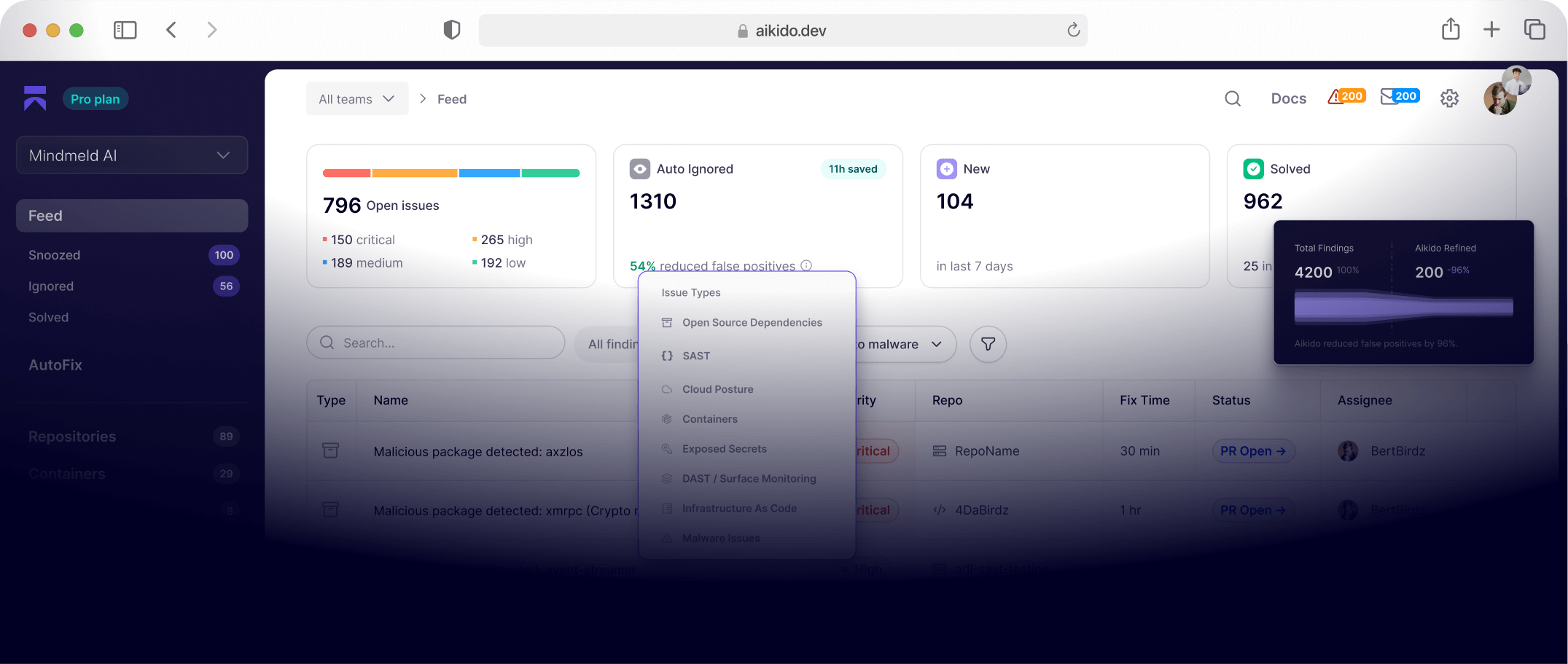

Our new report captures the voices of 450 security leaders (CISOs or equivalent), developers, and AppSec engineers across Europe and the US. Together, they reveal how AI-generated code is already breaking things, how tool sprawl is making security worse, and how developer experience is directly tied to incident rates. This is where speed and safety collide in 2025.

Vulnerabilities & Threats

Cut through the noise with real-world CVE breakdowns, malware analysis, exploits, and emerging risks.

Customer Stories

See how teams like yours are using Aikido to simplify security and ship with confidence.

Axios CVE-2026-40175: a critical bug that’s… not exploitable

Axios CVE-2026-40175 is rated critical, but in real Node.js environments it’s not practically exploitable. Here’s why.

Bug bounty isn’t dead, but the old model is breaking

Bug bounty is hitting a breaking point as AI overwhelms programs, pushing a shift toward more sustainable, quality-focused security models.

Aikido Attack finds multiple 0-days in Hoppscotch

Aikido Attack identified three high-severity vulnerabilities in Hoppscotch: an open redirect leading to account takeover, stored XSS, and a broken access control issue allowing cross-team request injection.

fast-draft Open VSX Extension Compromised by BlokTrooper

A popular Open VSX extension was compromised and used to deploy a RAT and infostealer from attacker-controlled infrastructure. Its version history tells the real story, with malicious releases appearing between clean ones.

Glassworm Strikes Popular React Native Phone Number Packages

Two popular React Native npm packages were backdoored by suspected Glassworm actors and used to deliver multi-stage malware. Here's what the malware does and what to look for.

How Security Teams Fight Back Against AI-Powered Hackers

A single hacker and a Claude subscription just took down nine Mexican government agencies. AI has handed attackers a serious power upgrade. Security teams need a new playbook.

How does AI pentesting work with compliance?

AI pentesting is being accepted for SOC 2, ISO 27001, HIPAA, and PCI DSS. Here's what auditors actually look for, and where the real limitations are.

Persistent XSS/RCE using WebSockets in Storybook’s dev server

Aikido Attack found a WebSocket hijacking vulnerability in Storybook's dev server that can lead to persistent XSS, remote code execution, and, in the worst case, supply chain compromise. We walk through how an attacker can exploit this with no interaction beyond a single page load: a developer just has to visit the wrong website to run into this attack.

Why Determinism Is Still a Necessity in Security

AI-powered security tools are getting better at finding vulnerabilities. But deterministic tools give you the consistency that pipelines, compliance, and audit trails depend on. We look at what deterministic scanning does well, where AI takes over, and how the two work together for effective security.

What is Slopsquatting? The AI Package Hallucination Attack Already Happening

AI models hallucinate package names — and attackers are registering them before anyone notices. Slopsquatting is the AI-era evolution of typosquatting, and unlike its predecessor, npm's existing protections don't work. We look at the real-world research showing it's already happening, from confirmed malicious packages still pulling hundreds of weekly downloads to a hallucinated package name that spread to 237 repositories through AI agent skill files.

Aikido × Lovable: Vibe, Fix, Ship

Lovable and Aikido bring pentesting into the platform, allowing builders to simulate real-world attacks and fix issues before shipping.

GlassWorm goes native: New Zig dropper infects every IDE on your machine

GlassWorm deploys a Zig-based native dropper hidden within a fake extension, silently compromising VS Code, Cursor, VSCodium, and other IDEs.

Top 12 Dynamic Application Security Testing (DAST) Tools in 2026

Discover the 12 best Dynamic Application Security Testing (DAST) tools in 2026. Compare features, pros, cons, and integrations to choose the right DAST solution for your DevSecOps pipeline.

Security testing is validating software that no longer exists

Modern teams ship faster than pentesting can keep up. Explore the growing speed gap in security testing—and why traditional approaches are falling behind.

Get secure now

Secure your code, cloud, and runtime in one central system.

Find and fix vulnerabilities fast, automatically.
