Bug bounty has been a very hot topic lately.

We’re seeing high-profile programs go offline or fundamentally change: the IBB (one of the most important programs for open-source projects) is pausing submissions, curl is removing payouts, and Node.js is removing its bounty entirely. That’s not noise; that’s signal.

I wanted to understand where bug bounty is actually heading, so I sat down with two of the most credible voices on opposite sides of this conversation:

- Daniel Stenberg, creator of curl, who is living the maintainer reality and recently halted bug bounty payments

- Casey Ellis, the founder of Bugcrowd, one of the people who helped establish the model

What I found was that the bug bounty model is at a crossroads, and we’re in the midst of a big shift.

Why bug bounty worked so well

Before we get into where the model is headed, let’s take a step back and understand why it’s been one of the most effective ideas in security over the last decade.

It all stems from the idea of letting the internet try to break your stuff before attackers do. And it worked because it gave companies scale they could never hire for.

As Casey put it:

“If you’re trying to outsmart a global pool of attackers with someone working 9 to 5, the math is wrong.”

That’s the magic of bug bounty. Instead of relying on a handful of internal people, you tap into a global pool of different skill sets, perspectives, and motivations - all attacking your system in ways your internal team simply didn’t think of. And that’s without the significant overheads required to hire specialist experts internally.

That’s why it became fundamental to modern security programs.

What’s breaking now

What’s changing isn’t the demand for security, it’s the economics of how bug bounty operates.

AI has altered the balance, and not in a good way. Finding bugs is now cheaper than ever, writing reports is even easier, and submitting them has effectively become frictionless. Meanwhile, the cost of validating those reports and actually fixing the issues hasn’t changed at all.

- Finding bugs → cheap

- Writing reports → cheaper

- Submitting reports → basically free

- Validating them → still expensive

- Fixing them → very expensive

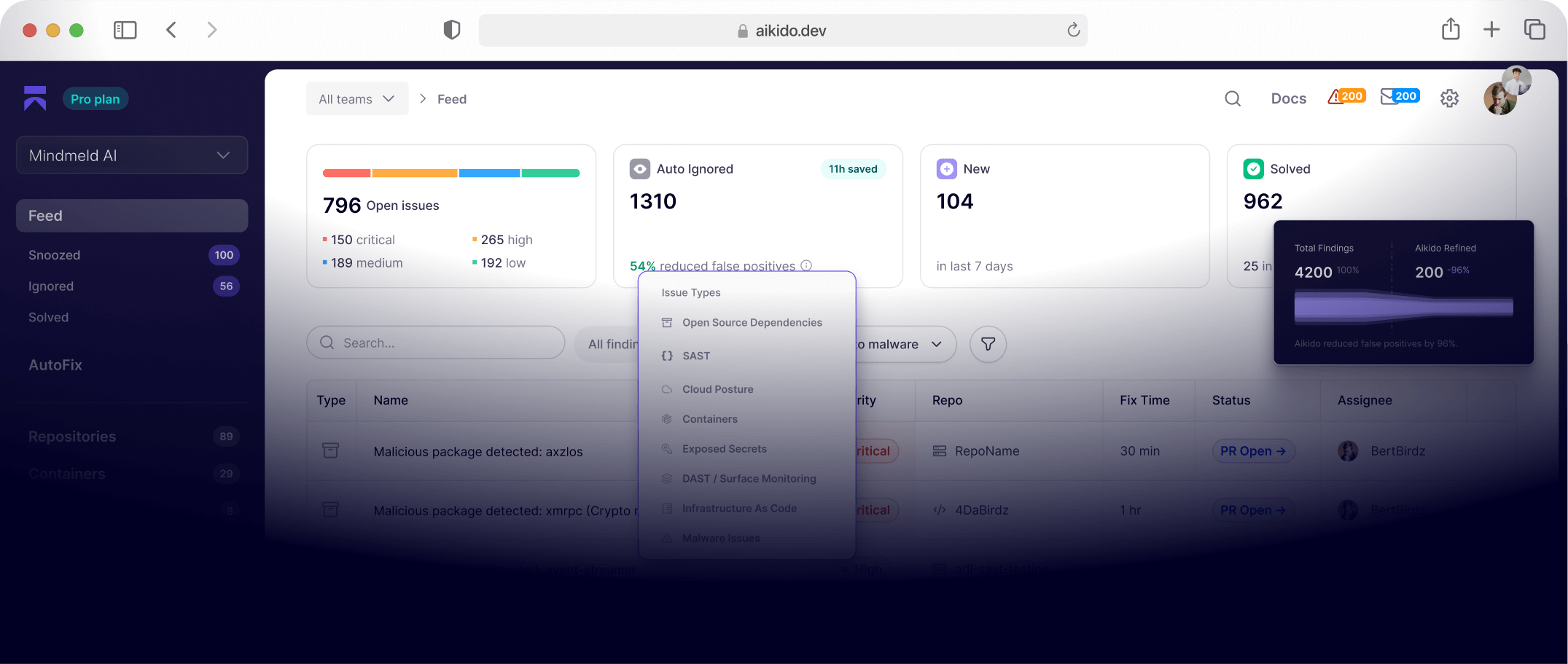

We are seeing this play out in practice. There are three types of report submitters. First, there are companies that take a new approach to producing legitimate reports: layered AI approaches that combine the strengths of multiple AI models, guardrails, orchestration, and context, such as Aikido’s own AI pentesting capabilities. Then there are individuals who scale up their research and report writing with AI as a tool. And finally, there are individuals who, by virtue of these AI models, are able to generate reports that seem technically plausible but are still completely wrong.

Daniel described it perfectly: more convincing crap is worse than obvious crap.

You can’t dismiss it quickly, you have to investigate it, and you waste real time proving it’s nonsense.

At scale, this stops feeling like a helpful external contribution model and starts to resemble something closer to a denial-of-service attack on the people responsible for security.

And the impact is devastating:

- The Internet Bug Bounty (IBB) paused new submissions because AI has dramatically increased discovery volume beyond what maintainers can handle

- Node.js lost its bounty when funding disappeared; reports still come in, but payouts are gone

- curl removed financial rewards after being flooded with AI-generated reports

This is an old problem, amplified

Casey emphasized that this isn’t a new problem. It’s an old one, just massively accelerated.

“We’re doing stupid things faster with more energy.”

Bug bounty has always struggled to be a level playing field: one person submits a report, and another person has to validate it. That sounds equal on paper, but in practice it has always been difficult for one person to keep up with validation, even before AI existed. Now, it’s practically impossible.

We’re now in a world where anyone can generate dozens of reports, make them appear credible, and submit them instantly. On the receiving end, however, the constraints haven’t changed. It’s still humans reviewing, triaging, and making decisions.
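To make that imbalance concrete, here is a minimal sketch of a toy backlog model. Every number and name in it is a hypothetical assumption chosen for illustration, not data from curl, Node.js, or the IBB. It only shows the arithmetic: once incoming reports exceed what a fixed pool of human hours can triage, the queue grows every week.

```python
def simulate_backlog(weeks, reports_per_week, triage_hours_per_report,
                     maintainer_hours_per_week):
    """Return the number of untriaged reports at the end of each week.

    All parameters are hypothetical inputs to a toy model.
    """
    # How many reports the maintainers can validate per week.
    capacity = maintainer_hours_per_week / triage_hours_per_report
    backlog = 0.0
    history = []
    for _ in range(weeks):
        # New reports arrive, then maintainers clear what they can.
        backlog = max(0.0, backlog + reports_per_week - capacity)
        history.append(backlog)
    return history


# Hypothetical "before": 3 reports a week, 2 hours each, 10 hours available.
before = simulate_backlog(4, reports_per_week=3,
                          triage_hours_per_report=2,
                          maintainer_hours_per_week=10)

# Hypothetical "after": submissions jump to 20 a week; human capacity is unchanged.
after = simulate_backlog(4, reports_per_week=20,
                         triage_hours_per_report=2,
                         maintainer_hours_per_week=10)

print(before)  # [0.0, 0.0, 0.0, 0.0]
print(after)   # [15.0, 30.0, 45.0, 60.0]
```

In this sketch the cost of submitting never appears, because for the submitter it is close to zero. The only lever on the receiving side is human hours, which is why the backlog grows in a straight line once volume passes capacity.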

Open source is the first to feel the impact

Open source is where this pressure shows up first, largely because it was already operating close to its limits. Most projects are maintained by small teams, often volunteers, with limited time and resources, yet they underpin massive portions of the internet.

Add financial incentives, global participation, and now AI-generated submissions, and the system gets overwhelmed.

The IBB program said it directly:

AI-assisted discovery has shifted the balance between findings and remediation capacity

Translation:

We’re finding more bugs than we can handle.

So now the bounty is gone, and yet the expectation of reporting remains. But the question is: can bug bounty programs keep scaling security teams and improving security posture without financial incentives?

Casey doesn’t necessarily believe so:

Every organization should have a vulnerability disclosure program, because if you’re on the internet, people will find issues. But not every organization is in a position to run a public, reward-driven bounty program.

In his words, curl likely shouldn’t have had one to begin with:

“I don’t think every organisation should [run a bounty program]… the curl program shouldn’t have been a bounty program in the first place.”

And yet, Daniel’s experience shows something more nuanced. Daniel views the bounty program as a success, because it incentivized real scrutiny of the code:

“I’ve always thought about it as a success because it’s a great way to actually encourage people to scrutinize the code…”

What happens when you remove financial incentives

You’d assume that when you remove financial incentives, you’d get rid of AI slop, but you’d also reduce the likelihood of genuine vulnerabilities being disclosed.

But when curl removed the financial incentives, something interesting happened. The low-quality, AI-generated noise largely disappeared.

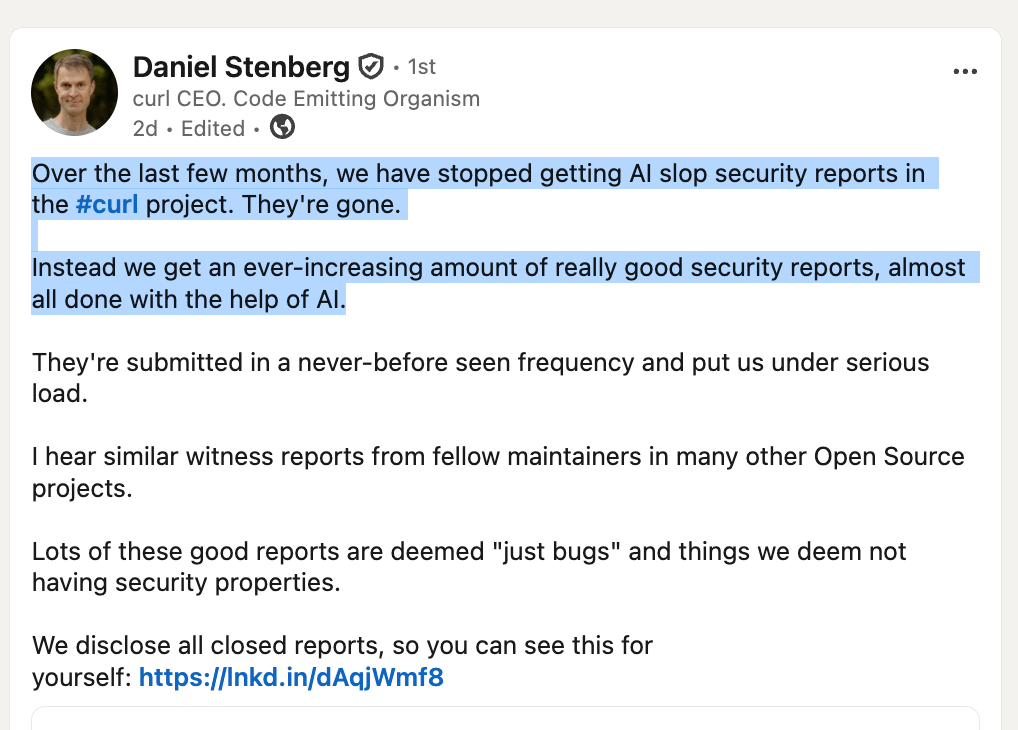

Daniel said: “We have stopped getting AI slop security reports… Instead we get an ever-increasing amount of really good security reports… submitted in a never-before seen frequency and put us under serious load.”

Instead of drowning in low-quality reports, maintainers are now dealing with a high volume of genuinely useful findings, many of which are powered by AI-assisted research. The barrier to entry has dropped, not just for bad reports, but for good ones too.

Which creates a new kind of pressure.

Even high-quality reports take time to understand, validate, and fix. And many of these “good” findings still fall into gray areas: bugs that may not meet security thresholds but still require attention. The result is a sustained, and in some ways increased, load on already constrained teams.

So in a strange way, the system hasn’t been relieved. It’s been refined.

Breaking the system to improve it

And this is where it gets interesting. Because while this is clearly painful in the short term, it might actually be a step in the right direction.

By removing financial incentives, we strip away a large portion of the noise. What’s left is a signal that is, on average, higher quality, more intentional, and more aligned with actual security outcomes.

At the same time, AI is lowering the barrier for researchers to do meaningful work. It’s enabling more people to find real issues, faster than ever before. That combination (less noise, more signal, but still overwhelming volume) suggests we’re in a transition phase.

The current model is breaking under the pressure. But what’s emerging underneath it might be better.

A system where:

- disclosure is expected, not incentivized

- rewards are more targeted, not broad

- and the focus shifts from more reports to better outcomes

We’re not there yet. Right now, we’re in the messy middle, where the old model no longer works, and the new one hasn’t fully formed.

But if this plays out correctly, we don’t end up with less bug bounty.

We end up with a more sustainable version of it.

Hackers aren’t going anywhere

One of the biggest misconceptions in all of this is the idea that if bug bounty struggles, hackers somehow disappear with it.

That’s never how this works.

Hackers don’t stop, they move. They follow opportunity, complexity, and gaps in understanding. When one area becomes saturated, they shift to another, whether that’s APIs, supply chains, or now increasingly AI systems and complex logic flaws.

As Casey pointed out, even if we solved the problems we have today, attackers wouldn’t simply pack up and go home. There will always be new technology, new systems, and new mistakes to exploit.

As long as humans are building software, there will be vulnerabilities.

Which means the need for people to find them doesn’t go away.

However, bug bounty hunters are moving away from the job, partly out of frustration with dealing with drained triagers, and partly because the financial incentive is being taken away. They are moving into consultancy roles and internal research positions instead.

What happens next

Bug bounty doesn’t disappear from here, but it does evolve.

What we’re likely moving toward is a model where vulnerability disclosure becomes a baseline expectation across the industry, rather than something optional or incentivised. Public bounty programs don’t go away, but they become more controlled, more targeted, and more aligned with organisational maturity.

At the same time, AI will inevitably play a larger role in filtering and triaging the growing volume of reports. It won’t solve the problem entirely, but it will become part of how we manage it.

We’ll also see a shift in what actually gets rewarded. As automated systems become better at finding low-level issues, the value of those findings will drop. Instead, incentives will move toward higher-impact work: the kind that requires creativity, context, and a deeper understanding of systems.

That means researchers will increasingly focus on areas like chaining vulnerabilities, exploiting business logic, and breaking complex or emerging technologies where automation still struggles.

In other words, the bar is moving up. Researchers won’t go away, but the rewards need to rise to match.