The recent coverage around Anthropic’s latest model, Mythos, has centered almost entirely on what it could do for attackers. A leaked draft blog post, seen by Fortune, describes the model as able to “exploit vulnerabilities in ways that far outpace the efforts of defenders”. The capability is significant enough that Anthropic says it wants to tread carefully and properly understand the model’s potential “near term risks in the realm of cybersecurity” before proceeding.

What followed was predictable: headlines about “AI’s looming cyber nightmare”, cybersecurity vendors warning about the democratization of cyber attacks, and a general acceptance that the balance has tipped.

Ominous, right?

Well, sure, at face value it is. But the balance hasn’t tipped. The doomsday framing rests on the assumption that model capability translates directly into attacker advantage, and our data suggests otherwise.

The assumption behind the Mythos narrative

AI models will undoubtedly speed up attack workflows. But how much that speed translates into effectiveness depends heavily on deep system context, which attackers largely lack.

The cybersecurity capabilities attributed to models like Mythos overlap significantly with what AI systems already do in controlled security testing environments: discovering vulnerabilities, reasoning about code, and chaining multi-step attacks. Our own data from 1,000 real-world AI penetration tests gives us a window into how performance changes under different conditions.

The pattern is consistent. Whitebox tests, where the target application’s source code is available, surfaced 7x more critical and high-severity issues, and ran at roughly twice the efficiency, compared with greybox tests that had limited access to source code. This suggests that AI effectiveness depends heavily on context, not just raw capability.

In practice, that context comes from combining static and dynamic analysis. Looking at code or behavior in isolation gives only a partial view. When both are available, the testing system can connect the code as written to how it behaves at runtime, and the depth of findings changes. So do the economics: fewer attempts (and therefore fewer tokens) are needed to surface meaningful issues.

The current commentary around Mythos assumes that attackers will benefit most from the latest frontier models. In practice, that overlooks the fact that attackers are the ones with limited context. They have to infer system details from the outside, while defenders already have access to how those systems actually work.

Context, not capability, is the constraint

Much weight is placed on how model developers themselves describe these capabilities, as happened when Anthropic claimed that Claude Opus 4.6 uncovered more than 500 high-severity vulnerabilities in open source libraries. Such claims show what the models can do under ideal conditions, but there is little discussion of how performance changes when they operate without full system visibility.

The main variable here is context. Access to source code and internal application logic determines what the testing agents can meaningfully evaluate. Capability alone doesn’t translate into results. Without sufficient static and dynamic code context, even the most advanced models fail to outperform lightweight open source models due to an incomplete understanding of the system they are probing.

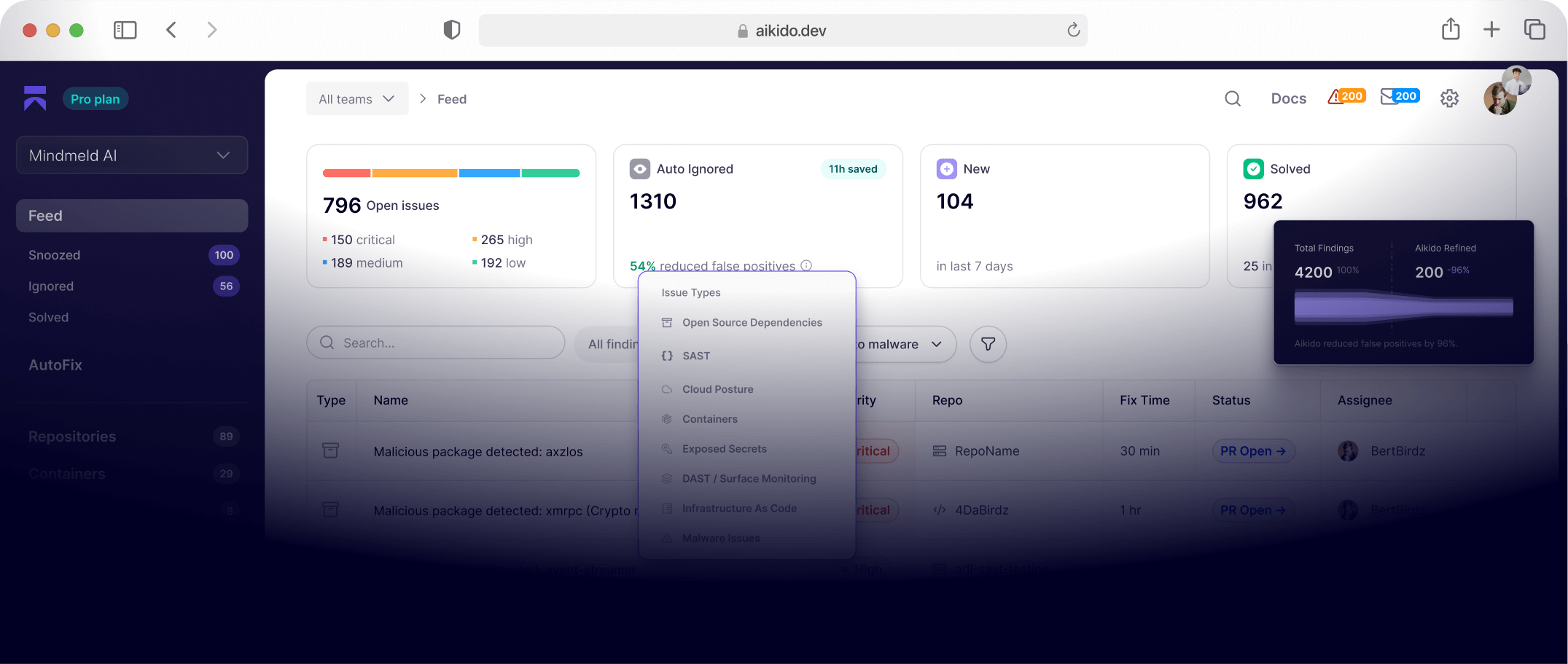

Consider the recent compromise of Axios, one of the most widely used packages in the npm registry. The attacker didn’t change Axios’s source code. They compromised a maintainer account, added a new dependency, and published an update. There was no known CVE to match against, no malicious code pattern to flag, and no signature for a scanner to catch. The attack succeeded because every tool in the chain lacked the context to see what had actually changed.

An organization with deep visibility into its dependency tree, knowing not just which packages it uses but what those packages do, how they behave, and what a legitimate update looks like, would have had a basis to question that change. Without that visibility, no amount of speed or capability helps. This is why the current “AI favors attackers” framing misses the point, and it is also where approaches to AI-driven testing start to diverge. Given full context across code and runtime behavior, privileged agentic tools identify issues that skin-deep testing simply misses.
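The sketch below shows what “a basis to question that change” can look like at its simplest. It is a minimal Python example that compares the dependencies declared in two consecutive releases of a package and flags any dependency that is new. This is a hypothetical illustration, not a description of how any particular tool works. The function names, the review policy, and the package names (`example-lib`, `left-pad` as a stand-in, `new-helper`) are all assumptions. It covers only the Axios-style pattern of unchanged source plus a new dependency. It does not attempt lockfile analysis or behavioral signals, which a real check would also need.

```python
import json

# Manifest sections whose packages npm installs for consumers of a package.
# Assumption: devDependencies are ignored because they are not installed downstream.
DEP_FIELDS = ("dependencies", "optionalDependencies")


def declared_deps(manifest_text: str) -> dict[str, str]:
    """Return {package name: version range} from a package.json document."""
    manifest = json.loads(manifest_text)
    deps: dict[str, str] = {}
    for field in DEP_FIELDS:
        deps.update(manifest.get(field, {}))
    return deps


def diff_release(old_text: str, new_text: str) -> dict[str, list[str]]:
    """Compare the declared dependencies of two releases of the same package."""
    old, new = declared_deps(old_text), declared_deps(new_text)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(n for n in old.keys() & new.keys() if old[n] != new[n]),
    }


def needs_review(diff: dict[str, list[str]]) -> bool:
    """Hypothetical policy: hold any release that introduces a brand-new dependency."""
    return bool(diff["added"])


# Hypothetical example: a patch release whose only change is one extra dependency.
old = '{"name": "example-lib", "version": "1.0.0", "dependencies": {"left-pad": "^1.3.0"}}'
new = ('{"name": "example-lib", "version": "1.0.1", '
       '"dependencies": {"left-pad": "^1.3.0", "new-helper": "^0.0.1"}}')

diff = diff_release(old, new)
print(diff)                # {'added': ['new-helper'], 'removed': [], 'changed': []}
print(needs_review(diff))  # True
```

The check is deliberately crude. A structural diff cannot distinguish a legitimate new dependency from a malicious one. It only surfaces the change, so that someone who knows what a normal update for this package looks like can judge it.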

None of this means the defender’s advantage in code and runtime visibility is permanent. AI will, of course, lower the cost of acquiring context too, but the current narrative implies an overnight shift in the balance. Building genuine system understanding is slow, complex work. AI models will increasingly be able to deduce certain aspects of context, but they will never match the clarity of what an organization has in-house: its actual source code, its API and application credentials and tokens, and the ability to quickly parse internal business logic across application components, microservices, and integrations.

In retrospect, this may all sound obvious, especially given the industry’s penchant for security doomerism. But sometimes it takes closer scrutiny of what is put in front of us to consider the actual impact. The general mantra has been that new AI models will drastically tip the balance, and that is true to an extent: AI will give attackers speed, breadth, and capability, and those defending applications, systems, and infrastructure will feel the impact.

But the nuance is that effectiveness depends largely on context, and context is unevenly distributed. Luckily for us, it is weighted in defenders’ favor. So while attackers may benefit first from emerging frontier AI models like Mythos and Capybara, defenders already hold the edge in deep, structural knowledge of how their code actually works. AI is making application security context more valuable than ever. The question is whether defenders will use the advantage they already hold.

Check out Aikido's Mythos-Ready checklist to learn how to apply the defenders' advantage and prepare for threats from emerging frontier AI models.