Deterministic security tools have been a regular part of security for so long that we stopped questioning the alternatives. Now that probabilistic AI models are becoming a core component of security tooling, it’s time to revisit determinism and get clear about what it’s actually needed for. Otherwise, why shouldn’t we just start replacing everything with AI?

In short, we need determinism for its predictability and efficiency. Deterministic tools like SAST cheaply return the same result every time you run them on the same code. That makes them useful in a CI/CD pipeline, defensible in a compliance audit, and a foundation for everything else. AI is great at logical reasoning, but because its output is unpredictable, it’s best saved for cases where you don’t need repeatable results.

As more probabilistic tools appear on the market, we want to break down how determinism creates sustainable security practices, how AI provides new possibilities, and how the two can be combined for maximum impact.

When security tools need to be predictable

Some security tasks demand consistency and auditability above all else. We see this need for predictability show up across the security workflow.

When a scanner flags a finding, a developer needs to trust it enough to act on it at 11 pm. When a CI/CD pipeline blocks a deployment, that block has to hold up under scrutiny. In a pipeline, your scanner runs on every commit or pull request. If it flags 12 issues on Monday and 9 on Tuesday against identical code, your pipeline results are no longer trustworthy. Developers start ignoring alerts because the signal is inconsistent, and you can't use scan output as a meaningful gate on merges.

Similarly, regression testing depends on reproducibility. When you fix a vulnerability and rescan, a clean result needs to mean it's actually fixed, not that the model missed it this run. Baseline and drift detection depend on it too: they have to distinguish a new finding that reflects a real change in the codebase from one that is just variance in the scanner.
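
To make the baseline idea concrete, here is a minimal sketch of diffing one scan against a stored baseline. It assumes each finding can be reduced to a stable fingerprint; the `Finding` record and the fingerprint scheme (rule ID, file path, and whitespace-normalized matched code) are hypothetical, not any particular scanner's format. The diff only means something if the scanner is deterministic: with a probabilistic scanner, part of the "new" and "fixed" lists would just be run-to-run variance.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical finding record; real scanners each have their own schema."""
    rule_id: str
    path: str
    snippet: str  # the matched source code


def fingerprint(f: Finding) -> str:
    # Key on rule, file, and whitespace-normalized code rather than line numbers,
    # so an unrelated edit that shifts lines doesn't look like a new finding.
    normalized = " ".join(f.snippet.split())
    key = f"{f.rule_id}|{f.path}|{normalized}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def diff_against_baseline(baseline: list[Finding], current: list[Finding]) -> dict[str, list[Finding]]:
    base = {fingerprint(f): f for f in baseline}
    curr = {fingerprint(f): f for f in current}
    # Sort the fingerprints so the report itself is reproducible across runs.
    return {
        "new": [curr[k] for k in sorted(curr.keys() - base.keys())],
        "fixed": [base[k] for k in sorted(base.keys() - curr.keys())],
        "unchanged": [curr[k] for k in sorted(curr.keys() & base.keys())],
    }


baseline = [Finding("sql-injection", "app/db.py", 'cursor.execute(f"SELECT * FROM users WHERE id={uid}")')]
current = baseline + [Finding("hardcoded-secret", "app/config.py", 'API_KEY = "sk-test"')]
result = diff_against_baseline(baseline, current)
print([f.rule_id for f in result["new"]])  # ['hardcoded-secret']
print(result["fixed"])                     # []
```

Keying on the matched code instead of line numbers is one common design choice: it keeps findings stable when unrelated edits shift the file, at the cost of reporting a finding as new if the vulnerable line itself is edited.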

Predictability is also necessary for compliance. Auditors want consistent, explainable findings over time, with a clear record of what was detected, when, and why. A scanner that produces different results on different runs can't provide that.

The practical cost of unreliability is that it slowly erodes trust in the tooling itself, until nobody takes findings seriously. Reproducibility, auditability, and low false-positive rates need to be the baseline that a security team can depend on.

Deterministic security tools

SAST is the quintessential deterministic security tool: it scans code for known vulnerable patterns, such as injection flaws and hardcoded secrets. (Known CVEs and dependency vulnerabilities are the territory of its deterministic sibling, software composition analysis, or SCA.) SAST works by parsing code into an abstract syntax tree and tracking how user-controlled data moves through the application, from entry points (sources) to where it's used (sinks). Each finding traces back to a specific rule that a human wrote and reviewed, and the rule either matches or it doesn't. Run the same scanner on the same code a thousand times, and you get the same findings.
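
Here is what "the rule either matches or it doesn't" can look like in practice: a toy check built on Python's standard `ast` module. It flags any call to a method named `execute` whose first argument is built at runtime (an f-string, concatenation, `%` formatting, or `.format()`), a common SQL injection smell. This is a deliberately simplified sketch: it matches a single pattern rather than doing real source-to-sink taint tracking, it will also flag harmless cases like two concatenated literals, and the rule ID is invented. Engines like Opengrep express rules in their own rule language and do far richer analysis.

```python
import ast

RULE_ID = "toy.sql-built-from-strings"  # hypothetical rule ID


def _is_dynamic_string(node: ast.AST) -> bool:
    """True if the node builds a string at runtime instead of using a literal."""
    # f"... {x}" parses to JoinedStr
    if isinstance(node, ast.JoinedStr):
        return True
    # "..." + x and "... %s" % x parse to BinOp with Add or Mod
    if isinstance(node, ast.BinOp) and isinstance(node.op, (ast.Add, ast.Mod)):
        return True
    # "... {}".format(x) parses to a Call on an attribute named "format"
    if isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute):
        return node.func.attr == "format"
    return False


def scan(source: str, path: str = "input.py") -> list[tuple[str, str, int]]:
    """Return (rule_id, path, line) for every .execute(dynamic string) call."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
            and _is_dynamic_string(node.args[0])
        ):
            findings.append((RULE_ID, path, node.lineno))
    # Sort by line so the report order never depends on traversal details.
    return sorted(findings, key=lambda finding: finding[2])


sample = '''
def get_user(cursor, uid):
    cursor.execute(f"SELECT * FROM users WHERE id = {uid}")

def get_user_safe(cursor, uid):
    cursor.execute("SELECT * FROM users WHERE id = %s", (uid,))
'''
print(scan(sample))  # [('toy.sql-built-from-strings', 'input.py', 3)]
```

The parameterized query on the last line is not flagged because its first argument is a plain literal, and rerunning `scan` on the same input always returns the same list.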

Repeatability is what makes deterministic tools well suited to the front of a pipeline. They're auditable because a human wrote the rules, and they're cheap enough to run on every commit without worrying about cost.

DAST sits on the deterministic end of the spectrum, too. It runs a defined set of attack simulations against a live application and returns consistent, repeatable results. It's a useful baseline check, since it’s faster and cheaper than AI pentesting (for now). Like any deterministic tool, though, it only finds what it has a test for.

We’re certainly not saying that deterministic security, or even just predictable output, is always better. The same property that makes deterministic tools reliable also limits them. They only find what they have a rule for, so novel attack patterns, nuanced logic flaws, and vulnerabilities that require understanding business context fall outside what a deterministic scanner can catch.

Deterministic tools on their own also can’t tell you which issues matter most in context. Some include basic reachability analysis (essentially static call graph tracing) that can confirm whether a vulnerable function is actually called. But what if we want to know whether a finding is exploitable in our specific application, given our data flows and business logic? That deeper kind of reachability analysis and prioritization requires a reasoning layer on top that goes beyond pattern matching.
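
The basic reachability check described above boils down to a graph search: starting from the application's entry points, can you walk the static call graph to the vulnerable function? Below is a minimal breadth-first-search sketch, assuming the call graph has already been extracted into a plain dictionary; the function names in it are hypothetical. Note what it can't answer: whether the data reaching that call is attacker-controlled or exploitable, which is exactly the gap a reasoning layer is meant to fill.

```python
from __future__ import annotations

from collections import deque


def find_call_path(call_graph: dict[str, list[str]], entry_points: list[str], target: str) -> list[str] | None:
    """Breadth-first search over a static call graph.

    Returns the shortest chain of calls from any entry point to `target`,
    or None if no entry point can reach it.
    """
    parent: dict[str, str | None] = {e: None for e in entry_points}
    queue = deque(entry_points)
    while queue:
        fn = queue.popleft()
        if fn == target:
            # Walk parent links back to the entry point, then reverse.
            path = []
            node = fn
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for callee in call_graph.get(fn, []):
            if callee not in parent:  # visit each function once (handles cycles)
                parent[callee] = fn
                queue.append(callee)
    return None


# Hypothetical graph: caller -> list of callees.
graph = {
    "handle_upload": ["parse_form", "save_file"],
    "save_file": ["yaml_load"],  # pretend yaml_load is the vulnerable function
    "cli_debug": ["yaml_load"],
}
print(find_call_path(graph, ["handle_upload"], "yaml_load"))      # ['handle_upload', 'save_file', 'yaml_load']
print(find_call_path(graph, ["handle_upload"], "unused_helper"))  # None
```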

Probabilistic security tools

Thanks to the rise of LLMs, we now have new AI-powered security tools like AI scanners, automated triage, and agentic pentesting that are probabilistic by nature. Probabilistic tools (or model-first tools) don't operate on fixed rules. Instead, they treat code as text and reason over it.

Because they’re not bound to fixed patterns, AI models can follow logic across functions, infer intent, and surface vulnerabilities that no rule describes. Business logic flaws are a prime example: finding them requires understanding what the code is trying to do, not just what it literally says. Probabilistic tools excel at this, and AI continues to improve. AI can find novel vulnerability classes and context-dependent bugs that previously would have required skilled human review.

However, by their nature, these probabilistic models are unpredictable and inconsistent. Outputs can (and likely will) vary between runs. LLMs generate output one token at a time by sampling from a probability distribution over possible next tokens, a distribution learned from their training data. That means the same input can produce different outputs depending on temperature, sampling settings, and what else is in the context window. This variation is fine, even helpful, for pentesting and finding new vulnerabilities. But in your CI/CD pipelines, it isn’t.
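
The source of that variance can be illustrated with a toy next-token distribution. The sketch below uses made-up scores (logits) for three candidate labels, applies a temperature-scaled softmax, and then either samples from it or takes the top choice (greedy decoding). Greedy decoding returns the same label every time; sampling at a nonzero temperature does not. It's a simplification: real model serving has further sources of variation, but the core mechanism is the same.

```python
import math
import random


def softmax_with_temperature(logits: dict[str, float], temperature: float) -> dict[str, float]:
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def next_token(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    if temperature == 0:
        # Greedy decoding: always take the highest-scoring token.
        return max(logits, key=logits.get)
    probs = softmax_with_temperature(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Made-up scores a model might assign when labeling a single finding.
logits = {"vulnerable": 2.0, "safe": 1.5, "unclear": 0.5}
rng = random.Random()  # unseeded, so each run of this script differs

greedy_labels = {next_token(logits, 0, rng) for _ in range(20)}
sampled_labels = {next_token(logits, 1.0, rng) for _ in range(20)}
print(greedy_labels)   # {'vulnerable'}
print(sampled_labels)  # typically more than one label, and it changes run to run
```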

There’s also a cost problem when you try to use a probabilistic reasoning model as a blanket code scanner on every commit. James Berthoty at Latio did some informal testing that showed a probabilistic AI model spending 17 minutes and 155,000 tokens to surface an issue that Opengrep, a deterministic SAST engine, found in 30 seconds. Across every pull request in an active codebase, that trade doesn't make sense.
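
To put those informal numbers in perspective, here is the back-of-the-envelope arithmetic. The per-scan figures come from the test above; the weekly pull request volume is a hypothetical assumption, as is the naive premise that each pull request costs exactly one such scan. Real costs would depend on the model, its pricing, and the codebase.

```python
# Figures from the informal test cited above.
ai_seconds_per_scan = 17 * 60  # 17 minutes
ai_tokens_per_scan = 155_000
sast_seconds_per_scan = 30

# Hypothetical assumption: an active repo with 200 pull requests a week.
prs_per_week = 200

slowdown = ai_seconds_per_scan / sast_seconds_per_scan
ai_hours = prs_per_week * ai_seconds_per_scan / 3600
sast_hours = prs_per_week * sast_seconds_per_scan / 3600
ai_tokens = prs_per_week * ai_tokens_per_scan

print(f"{slowdown:.0f}x slower per scan")                               # 34x slower per scan
print(f"{ai_hours:.1f} h vs {sast_hours:.1f} h of scan time per week")  # 56.7 h vs 1.7 h of scan time per week
print(f"{ai_tokens:,} tokens per week")                                 # 31,000,000 tokens per week
```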

Why we need both

When you combine deterministic and probabilistic tools, however, and play each to its strengths, you get a security pipeline whose whole is greater than the sum of its parts. In practice, you want deterministic scanning running on every commit, catching known vulnerability classes quickly and consistently, with AI reasoning on top to handle triage and context.

Together, they cover the spectrum from “what we know is dangerous” to “what we haven’t thought to look for yet.” We expect the latter category to grow. As AI gets embedded into more codebases and attackers gain access to the same reasoning capabilities, many vulnerabilities won’t have a rule written for them yet, so we’ll need tools that can keep up.

Another reason you need both is that they often tackle the same problem at different depths. DAST and AI pentesting are a good example. DAST excels at fast, deterministic checks that need to run continuously: you need to know as soon as possible if you have obvious open ports or pages that shouldn’t be public. AI pentesting is slower and costs more per run, but it operates at a fundamentally different depth. An insecure direct object reference (IDOR) that takes three authenticated steps to reach won't show up in a DAST scan, but it will show up in a good AI pentest. Get the easy stuff out of the way quickly with DAST, then follow up with AI pentesting for the more complex issues.
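
The "pages that shouldn’t be public" check is a good example of what deterministic tooling does well: a fixed list of probes with a fixed pass/fail rule. Here is a minimal sketch under stated assumptions: the path list is illustrative rather than any product's actual test set, treating only HTTP 200 as "exposed" is a simplification, and the HTTP call is injected as a function so the example runs without a network (in practice you might pass in a small wrapper around `urllib.request` or your HTTP client of choice).

```python
from typing import Callable

# Illustrative paths that usually shouldn't be publicly reachable.
SENSITIVE_PATHS = ["/.env", "/.git/config", "/admin", "/server-status"]


def check_exposed_paths(base_url: str, fetch_status: Callable[[str], int]) -> list[str]:
    """Return the sensitive URLs that answer 200 without authentication.

    `fetch_status` is any function that takes a URL and returns an HTTP status
    code; injecting it makes the check easy to test and to rerun identically.
    """
    exposed = []
    for path in SENSITIVE_PATHS:
        url = base_url.rstrip("/") + path
        if fetch_status(url) == 200:
            exposed.append(url)
    return exposed


# Usage with a stubbed server: only /admin is accidentally public.
fake_responses = {"https://example.test/admin": 200}
print(check_exposed_paths("https://example.test", lambda url: fake_responses.get(url, 404)))
# ['https://example.test/admin']
```

Given the same responses, this check always produces the same report, which is what makes it cheap to run on every deploy. It will never find a multi-step IDOR, though.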

How Aikido uses both

We've built Aikido on the premise that deterministic and AI-powered scanning solve different problems at different layers of the stack. Since day one, we've chosen whichever tool produces the best results at each step.

The deterministic foundation is Opengrep, the open-source code analysis engine that Aikido helps lead and maintain. On top of that, we've developed taint analysis and curated rulesets precise enough to integrate directly into CI/CD pipelines without generating noise.

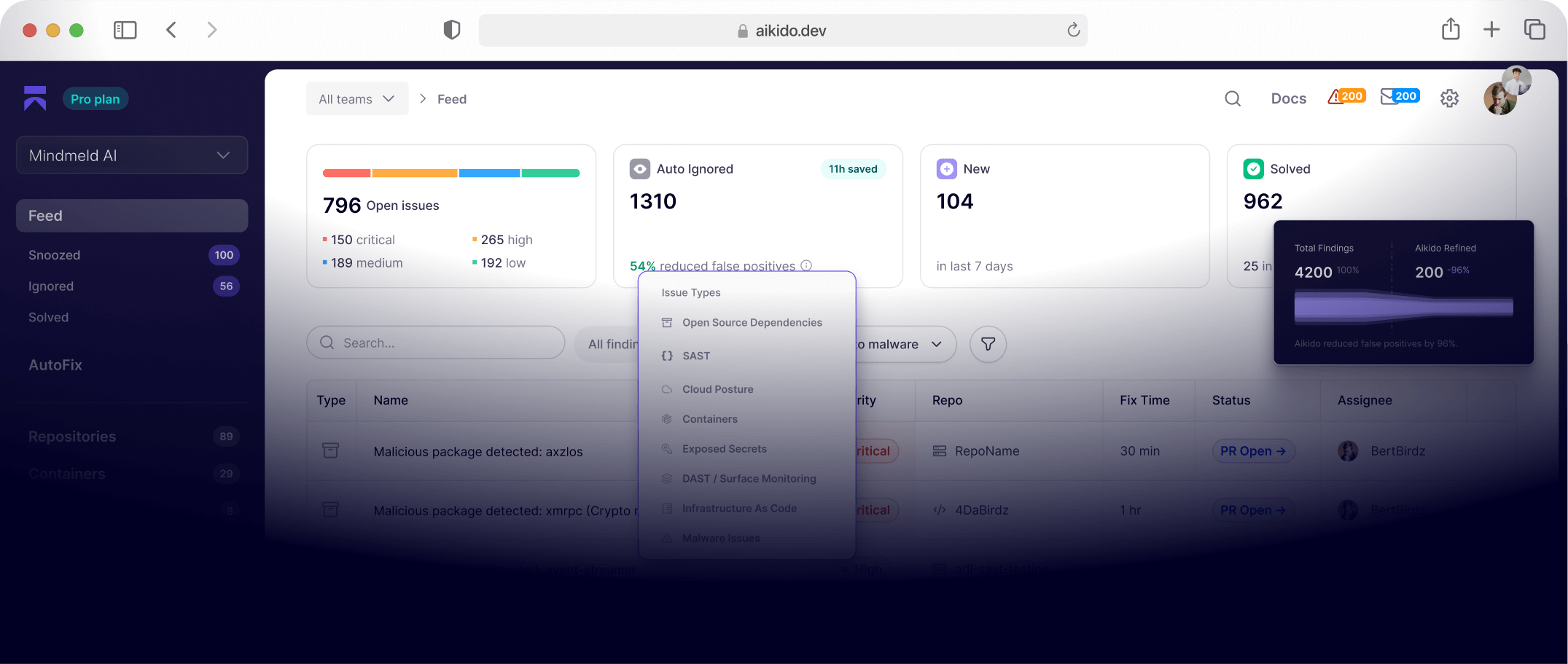

Where probabilistic security really shines for us is in making sense of this data. AI reasoning sits on top of the deterministic foundation, handling the problems that rules can't express. In Aikido, this happens through AutoTriage, which sits downstream of SAST scans and makes determinations about exploitability and severity that a rule engine can't make on its own.

AutoTriage runs in two stages. First, Aikido's reachability engine filters out false positives before an LLM ever looks at the code. It checks whether vulnerable code paths are actually reachable, whether sanitization exists between source and sink, and whether the affected dependency is used in production or only in tooling or pipelines. This first step alone suppresses a significant share of the alerts that an average SAST scanner would raise.
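
Aikido's reachability engine isn't reproduced here, so the following is only a hypothetical sketch of the kind of rule-based pre-filter this paragraph describes. It assumes earlier deterministic analysis has already attached three facts to each finding: whether the path is reachable, whether a sanitizer sits between source and sink, and whether the affected code ships in production. The `TriageInput` type and its field names are invented for illustration. Anything the filter can't rule out goes on to the reasoning stage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageInput:
    """Hypothetical per-finding facts produced by deterministic analysis."""
    finding_id: str
    reachable: bool           # can an entry point reach the vulnerable path?
    sanitized: bool           # is there a sanitizer between source and sink?
    used_in_production: bool  # False if only used in tooling or CI pipelines


def prefilter(findings: list[TriageInput]) -> tuple[list[str], list[str]]:
    """Split findings into (suppressed, needs_reasoning), by finding ID."""
    suppressed, needs_reasoning = [], []
    for f in findings:
        if not f.reachable or f.sanitized or not f.used_in_production:
            suppressed.append(f.finding_id)
        else:
            needs_reasoning.append(f.finding_id)
    return suppressed, needs_reasoning


batch = [
    TriageInput("F1", reachable=False, sanitized=False, used_in_production=True),
    TriageInput("F2", reachable=True, sanitized=True, used_in_production=True),
    TriageInput("F3", reachable=True, sanitized=False, used_in_production=False),
    TriageInput("F4", reachable=True, sanitized=False, used_in_production=True),
]
print(prefilter(batch))  # (['F1', 'F2', 'F3'], ['F4'])
```

Because this stage is plain logic, the same finding is always suppressed or escalated the same way, and only the survivors incur LLM cost. A real engine would also need to check that a sanitizer actually fits the sink (HTML escaping doesn't protect a SQL query), which a single boolean glosses over.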

For the complex cases that survive that filter, reasoning models evaluate the control and data flows in context. Internally, we found that this approach correctly identifies roughly twice as many false positives on complex cases as non-reasoning approaches do. For example, a SQL injection finding might be safely downgraded because the input originates from a trusted upstream source, while a NoSQL injection on a login endpoint gets upgraded to critical because the attack path is trivial and the impact is direct.

The reason this works is context control. Running an LLM over an entire codebase and asking it to find vulnerabilities is what produces inconsistent results. The model loses the thread, widens its guesses, and your false positive rate climbs. Aikido's approach narrows what the AI sees before it ever reasons. Taint analysis traces how user-controlled data moves through the application, and endpoint-awareness gives the model the full stack trace and the intent of the code. Is this a web API? A command-line tool? What's it supposed to do? With that context locked in, the AI isn't guessing. It's evaluating a specific, bounded question. That's how you get AI reasoning power without giving up reproducibility.
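
Here is a hypothetical sketch of what narrowing the context can look like: instead of handing the model a repository, you assemble a small, fixed-shape question from the deterministic results (the taint trace and the endpoint metadata). The types, field names, and prompt wording are assumptions for illustration, not Aikido's actual prompt. Because the prompt is built deterministically, the same finding always yields the same question; the model's answer can still vary, but the intent is that it varies over a much narrower space.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaintStep:
    path: str
    line: int
    code: str


@dataclass(frozen=True)
class EndpointContext:
    kind: str            # e.g. "web API" or "CLI tool"
    route: str
    purpose: str         # short description of what the code is meant to do
    authenticated: bool


def build_triage_prompt(rule_id: str, trace: list[TaintStep], endpoint: EndpointContext) -> str:
    """Assemble a bounded, reproducible question for a reasoning model."""
    steps = "\n".join(
        f"{i}. {s.path}:{s.line}  {s.code}" for i, s in enumerate(trace, start=1)
    )
    return (
        f"Finding: {rule_id}\n"
        f"Application type: {endpoint.kind}\n"
        f"Endpoint: {endpoint.route} (authenticated: {'yes' if endpoint.authenticated else 'no'})\n"
        f"Intended behavior: {endpoint.purpose}\n"
        f"Data flow from source to sink:\n{steps}\n\n"
        "Question: Given only this data flow and context, is the sink reachable "
        "with attacker-controlled input? Answer EXPLOITABLE, NOT_EXPLOITABLE, or "
        "UNSURE, followed by a one-sentence reason."
    )


trace = [
    TaintStep("app/routes.py", 12, "email = request.json['email']"),
    TaintStep("app/auth.py", 40, "users.find_one({'email': email})"),
]
endpoint = EndpointContext("web API", "POST /login", "Log a user in by email and password", authenticated=False)
print(build_triage_prompt("nosql-injection", trace, endpoint))
```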

Finally, because AI models are well suited to finding complex, logic-based attack patterns, we have found them highly effective for pentesting. Aikido Attack is AI pentesting that puts probabilistic reasoning to work on the live application. Agents complete tests in hours rather than weeks and regularly find deeper logic flaws, including IDORs, auth bypasses, and e-signature forgery, some of which even human testers miss. And now, with Aikido Infinite, agents can pentest every release.

For confirmed true positives from SAST or pentesting, Aikido also uses AI to reason about the fix. AutoFix generates a targeted patch and opens a pull request, another task that takes reasoning and context to do well.

At Aikido, determinism handles predictable coverage at scale. AI handles the judgment calls that rules can't make.

What’s next?

Security needs both deterministic dependability and AI creativity. Determinism gives you consistent, auditable, and trustworthy results at scale, while AI gives you the depth, creativity, persistence, and reasoning to clear out false positives, reduce noise, and find unusual vulnerabilities. Both probabilistic and deterministic tools have limitations, but the real problem arises when one is used in the wrong place. Things break down when you put a reasoning engine in a job that needs a pattern matcher, or vice versa.

The broader direction is clearer than the future of any single product category. Deterministic tools are getting better because AI now sits on top of them, handling the prioritization, triage, and fixes that rules alone couldn't. Probabilistic tools are getting better at finding complex logic flaws and novel attack paths, and over time, they'll get more cost-efficient too. But for continuous baseline checks, the economics of deterministic scanning aren't going away. You want something fast, consistent, and cheap running on every commit, and that's still a pattern matcher.

The teams building the most durable security posture are the ones using both deterministic and probabilistic tools in the right order and in the right places, not betting on one.