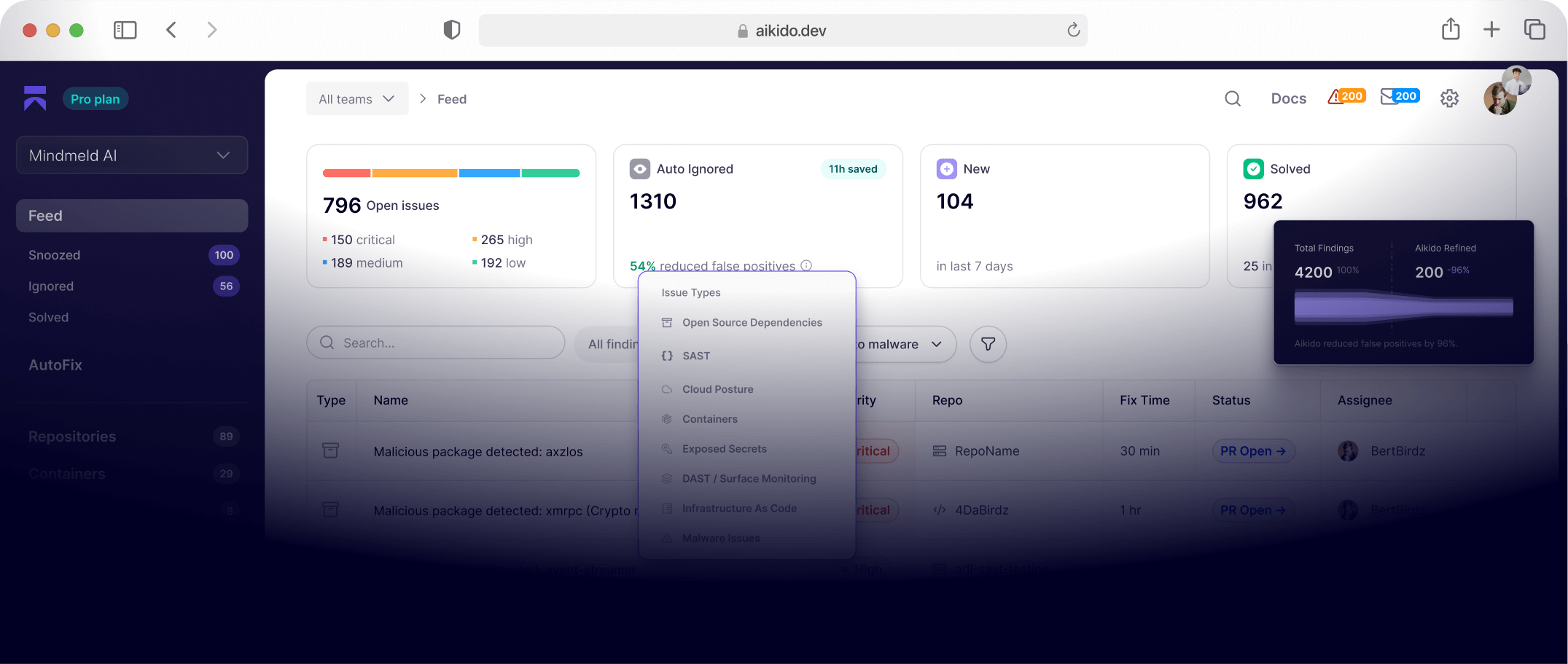

You’ve heard all of the hysteria around AI agents and all the seemingly limitless possibilities. And while those possibilities are all well and good, you’re only really interested in agentic AI capabilities that address your actual problems head on.

And then when you think of all the productivity gains and ROI benefits, you stop and think, “okay this is great but, what if these agents go outside of their scope?” - that’s regardless of whether you’re deploying your own AI agents internally or benefitting from an external vendor’s AI agent capabilities.

And it’s a valid question to ask. Agents, just like other AI capabilities, need constraints. Without them, they can run wild. Agents are curious by design. Like a toddler, they’ll try every door they can reach. In many cases you need them to explore, but you also need to ensure that the doors that shouldn’t open are physically locked.

When it comes to cybersecurity, this matters even more: the minimum safety requirements for AI agents need to be even more stringent. For Aikido Attack, our AI pentesting capability, we’ve considered every layer to prevent agents from going out of scope. This covers elements such as accidentally testing production and losing control.

Going out of scope is one of the key topics security leaders and engineers are asking us about, and it’s something we considered when developing our platform at the outset. Naturally, as a cybersecurity company, we wanted to get this right.

It’s worth remembering that agents are expected to attempt unexpected or risky paths, but that guardrails exist to contain that behavior, not prevent it.

Aikido Attack and Infinite work on a layered approach, using both hard boundaries and soft boundaries. Here are the key elements you need to know about:

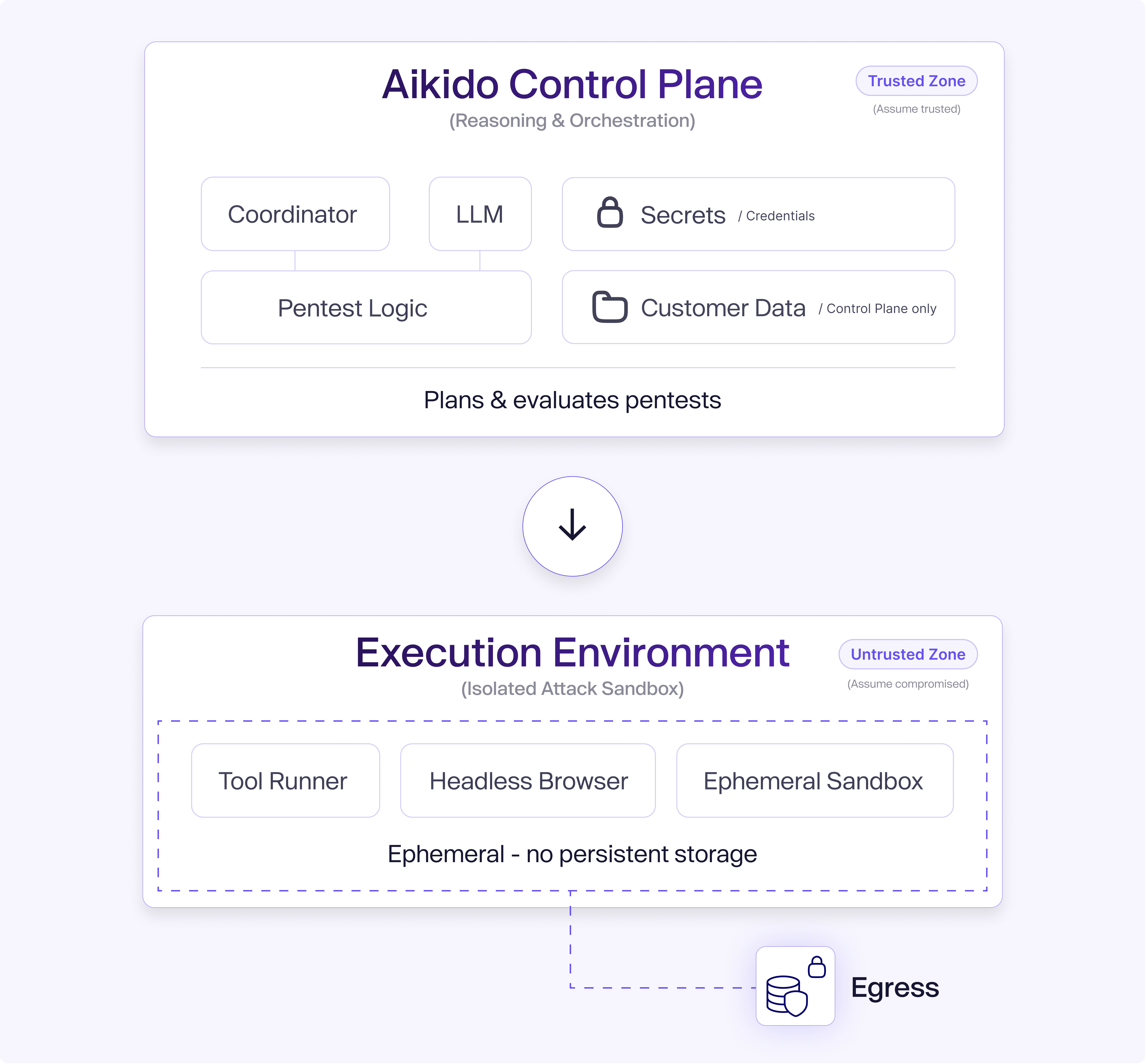

Layer 1: Hard architectural separation: Control plane vs execution

Aikido’s system is architected with a strict separation between the system that plans and evaluates pentests (the control plane) and the environment that actually executes actions (the isolated execution sandbox).

All reasoning, orchestration, and access to sensitive data happen in the control plane. Tool execution, browser automation, and network interactions happen in a separate environment.

The separation exists because we assume that execution can misbehave and therefore, any impact must be contained. It’s for this reason that the execution environment has no access to orchestration secrets, internal infrastructure, or control-plane systems.

Layer 2: Runtime scope enforcement

Production is never assumed as in-scope

Our system never assumes production is in scope to be attacked. Pentesting is expected to run against staging and test environments only. Production has to be explicitly configured as in-scope, and even then, this would be reviewed and acknowledged before anything runs.

We’ve seen our guardrails work in practice. In one case, an agent followed application behavior that would have led it to production infrastructure. The hard boundary we have in place blocked the request at the network layer. However, we could see that the agent tried. This blocked attempt is proof that our guardrails work.

Only domains that are allowed can be accessed

Our agents can only interact with explicitly configured domains. If a domain is not allow-listed, it’s blocked at the network level. This is something you can set up yourself, specifying which domains are attackable or accessible. Put simply, we block domains by default to prevent the agent from interacting with servers that they’re not supposed to interact with.

This means we don’t rely on prompts or humans for scope enforcement. Aikido technically enforces it ourselves.

Accidental scope drift is blocked

Back to our toddler analogy. Even though most other safety controls mean that the agents won’t drift from scope, there’s a limited number of agents that, well, just do. Especially when you have 250 agents running at the same time.

A classic example of this is if an agent is redirected to an external application through a link, they assume they’re still on the same page, but they’re actually on another website. So suddenly they’re on X or Reddit, and they assume this is part of the scope.

This is why you need hard checks in place to protect the agents from, well, themselves. As Phillippe Dourassov, AI Pentest Lead at Aikido Security, puts it:

“There’s going to be five percent of agents that aren’t always sensible, and that’s why we make sure that we deal with this five percent”.

Layer 3: Prompt injection and data exfiltration

We know that prompt injection is a key risk in autonomous AI systems, whereby an attacker inserts malicious instructions in content that the agent reads. The agent interprets those instructions as legitimate guidance and follows them.

That could mean content that urges agents to send the source code or internal data to somewhere where it shouldn’t be. This vulnerability comes from being exposed to untrusted content and then acting on it. Aikido removes both of those options.

First, Aikido’s agents do not have open internet access. This means agents cannot go on a Google search to find out how a type of technology works, or go on Reddit and take on instructions to do something unsafe. The only content they process is what exists inside the scoped application itself.

Second, even if malicious instructions were somehow planted inside the target application, the agents are still not permitted to exfiltrate data. Network-level restrictions prevent outbound connections to random destinations, so the agent cannot upload source code to Google Drive, or post to an external endpoint, or send data to an attacker-controlled domain.

We enforce this at the network layer by intercepting and controlling both HTTP and DNS traffic from agents, preventing them from resolving or communicating with domains that aren’t explicitly approved.

So, in the worst-case scenario, where a model misinterprets instructions, it’ll still be unable to send anything outwards.

One edge case worth mentioning is if a customer deliberately injects malicious instructions into their own environment (although we’re not sure why this would be the case?!), the agent may well process this. But even then, the only impact will be on the customer’s own test. There’s no cross-tenant risk, infrastructure exposure or data leakage beyond what they already control.

Layer 4: Isolated sandboxes for each agent

Each of our agents have their own little isolated sandbox (think: toddler in playpen). That means they’re separated from both Aikido's internal infrastructure and from other agents that are running at the same time. This means they’re separated from access to Aikido’s network, infrastructure and databases, and cannot interfere with or influence other active sessions.

If something behaves unexpectedly during a test, the impact is contained in that single sandbox - preventing both impact across agents and cross-tentant exposure.

Layer 5: Operational safeguards

All requests are rate-limited and load-aware, ensuring that tests do not overwhelm target systems or trigger a barrage of alerts.

In addition, tests can be paused or terminated immediately at any time. Customers can see what agents are doing in real-time. Every request and action is visible. This means teams can intervene if they deem it necessary.

Setup validation

Configuration mistakes are more likely than malicious behaviour. It’s for this reason that, before tests begin, Aikido uses pre-flight checks to validate authentication and reachability. If someone appears misconfigured or resembles a production environment, warnings are surfaced early. This means safeguards are designed to catch human error before execution starts, rather than relying on runtime controls to fix avoidable setup mistakes.

Soft boundaries

Our layered approach means we also have soft boundaries. This is where you wouldn’t need a domain to be accessible for the agents to use it.

For example, if you had an authentication portal, then within that portal, you may want the agents to use the authentication to log into the application, but you don’t want the agents to attack the portal itself.

The soft boundary means the agents can still reach the authentication portal, but are specifically instructed not to attack it.

How scope is enforced: Human vs AI pentesting

In a traditional pentest, scope is enforced through documentation, contracts, and professional judgment. Testers are briefed on which environments are in scope. This works well in practice, but staying within the boundaries depends on the tester’s discipline and experience.

For instance, if a tester follows a redirect into the wrong environment or misidentifies a system, the issue is typically discovered later through logs or review.

With AI pentesting, scope is enforced through technical controls. If a domain is not allow-listed, the connection is blocked. If production is not explicitly selected, it’s not reachable, and if a redirect leads outside the scope, then the request fails automatically.

Both approaches are effective. The advantage of technical enforcement is that it reduces reliance on documentation and interpretation.

To benefit from AI pentesting, which has already shown better results than manual pentesting, in terms of finding critical and high-severity issues, try Aikido Attack now