AI pentesting has been making waves and rivals the power of human hackers in ways we weren’t expecting. But frequently, companies are looking for pentesting to achieve and support their compliance certifications.

In the past, auditors have rejected results from automated tools. That wasn’t because a human was required to sit and do all the testing. It was because those old tools weren’t doing anything close to a proper pentest. An AI pentest running 250 orchestrated agents against your application closely matches how human pentesters perform their assessments. This means exploring the application, understanding how features work, finding ways to break them, and validating that the issue is actually exploitable before putting it in the report.

True AI pentests today are being regularly accepted by auditors. In this post, we’ll discuss misconceptions about AI pentesting and how it relates to compliance, and explain how and when you can use AI pentesting to meet your compliance requirements.

What do you need for compliance pentesting, really?

When an auditor asks for a penetration test, they're asking for documentation that your application was tested against a defined set of attack vectors and testing methodologies, that findings were validated and recorded, and that you have a remediation plan for anything critical. Whether it was a human that sat in front of a terminal for two weeks or AI agents that spent a day on it isn’t really the question. Infrastructure also changed much more slowly in the past. With quarterly releases, the thought of performing weekly penetration tests was absurd. That is no longer the case.

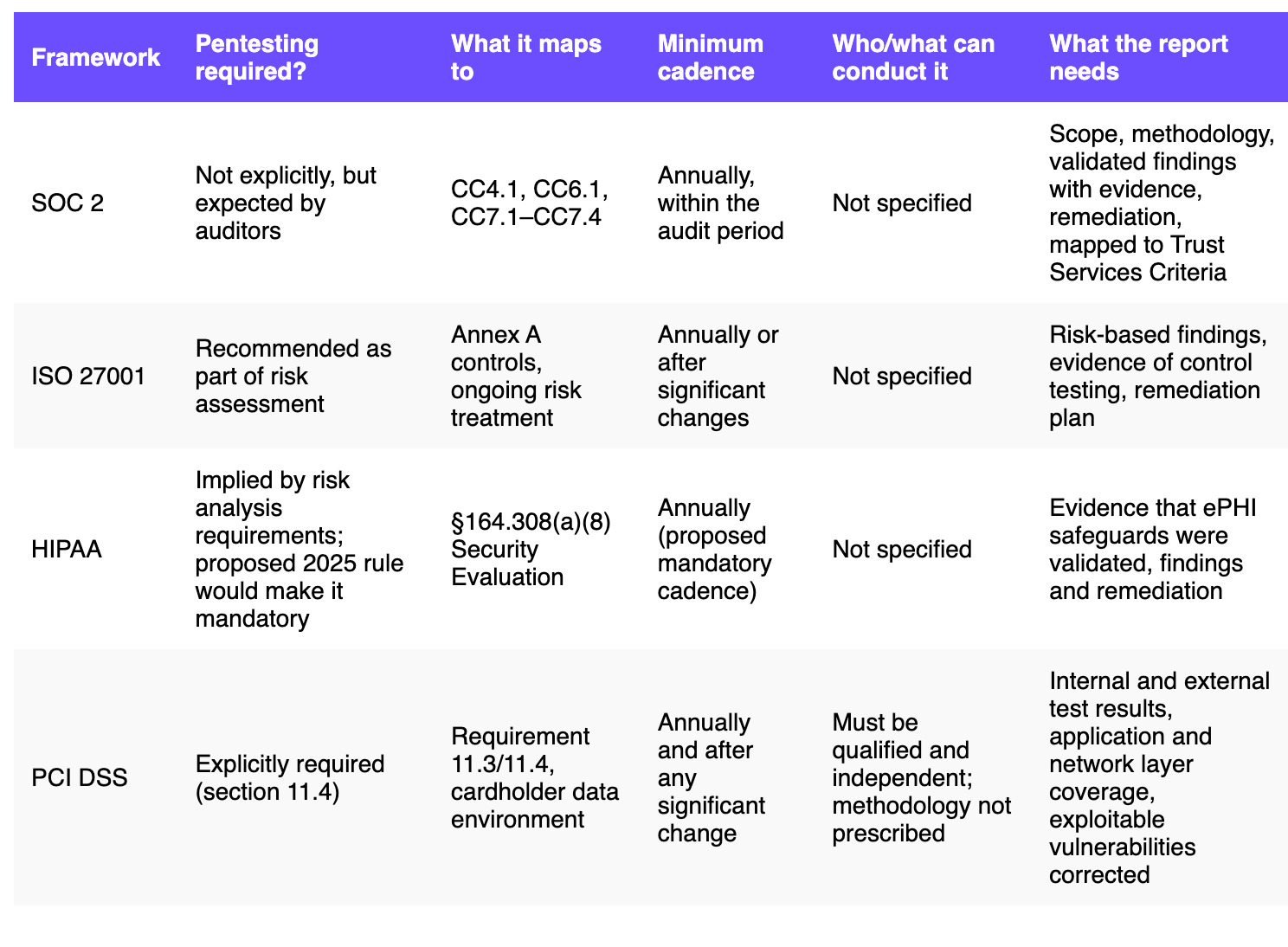

The most common frameworks that require or recommend pentesting are SOC 2, ISO 27001, HIPAA, and PCI DSS. Most of them don't explicitly require a human to have conducted the test. What they specify is coverage, methodology, and documentation. PCI DSS is less straightforward: its guidance defines penetration testing as 'essentially a manual endeavor' and states that automated tools alone don't satisfy the requirement (we'll look at what that means for AI pentesting later).

Let's look at SOC 2. The framework doesn't actually mandate pentesting at all. What it does require is that you demonstrate your controls are effective, particularly around logical access (CC6.1), change management (CC8.1), and system operations (CC7.1 through CC7.4). Auditors have settled on pentesting as the most credible way to evidence those controls, because it shows that someone actually tried to break them. A pentest report that maps findings to these criteria, documents what was tested, and shows remediation of anything critical is what satisfies the requirement. As it turns out, the framework says nothing about who or what conducted the test.

ISO 27001 follows a similar pattern, recommending pentesting as part of ongoing risk assessment.

HIPAA has historically treated pentesting as a best practice rather than a hard requirement, but that's changing. In December 2024, HHS proposed updates to the HIPAA Security Rule that would make annual penetration testing mandatory for all covered entities and business associates, with testing required to be performed by qualified personnel with appropriate cybersecurity knowledge. That rule is expected to be finalized by mid-2026. If you're in healthcare, check directly with your compliance team on current status.

Across all of these frameworks, the expected deliverable is a structured report with an executive summary, a methodology section, validated findings with evidence and reproduction steps, severity ratings, and remediation guidance. The OWASP Web Application Security Testing Guide is the benchmark most testers follow for coverage (and it's a long list). Even a human team working with a week's budget can't realistically work through all of it in any depth. They have to triage and prioritize the most important things. With AI pentesting, frequency and breadth no longer have to limit the testing scope.
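The report structure described above can be checked mechanically before it goes to an auditor. The following is a minimal sketch, not any vendor's actual report schema: the `PentestReport` and `Finding` classes, their field names, and the `report_gaps` helper are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    # Hypothetical fields mirroring the report elements listed above.
    title: str
    severity: str = ""
    evidence: str = ""
    reproduction_steps: list[str] = field(default_factory=list)
    remediation: str = ""


@dataclass
class PentestReport:
    executive_summary: str = ""
    methodology: str = ""
    findings: list[Finding] = field(default_factory=list)


def report_gaps(report: PentestReport) -> list[str]:
    """Return a list of missing elements; an empty list means none were found."""
    gaps = []
    if not report.executive_summary.strip():
        gaps.append("missing executive summary")
    if not report.methodology.strip():
        gaps.append("missing methodology section")
    for f in report.findings:
        if not f.severity.strip():
            gaps.append(f"{f.title}: no severity rating")
        if not f.evidence.strip():
            gaps.append(f"{f.title}: no evidence")
        if not f.reproduction_steps:
            gaps.append(f"{f.title}: no reproduction steps")
        if not f.remediation.strip():
            gaps.append(f"{f.title}: no remediation guidance")
    return gaps


# Illustrative data only: one complete finding, one incomplete finding.
report = PentestReport(
    executive_summary="Two issues found.",
    methodology="OWASP WSTG, grey box.",
    findings=[
        Finding(
            "IDOR on invoice endpoint",
            "high",
            "HTTP request/response trace",
            ["log in as user A", "request user B's invoice"],
            "enforce an ownership check",
        ),
        Finding("Verbose error page", "low"),
    ],
)

for gap in report_gaps(report):
    print(gap)
```

A check like this only confirms that the expected sections are present. Whether the content of each section is good enough remains a judgment for your auditor.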

The assumption that compliance pentesting means human pentesting isn't written into most frameworks. It's been true by default because, until LLMs, no technology could come close to actually performing a pentest. For teams in heavily regulated sectors with specific compliance requirements, it's worth having that conversation with your auditor directly. For most, however, the report won't raise any flags. AI pentesting covers the requirements.

Where AI pentesting already delivers for compliance

Audit trails

The audit trail from an AI pentest is extensive and detailed, often better than many human pentest reports. Every request sent, every payload tried, every action taken by every agent is logged. You can see exactly what was tested, how the test was conducted, and what was found. Most human pentest reports give you findings and a methodology section. They’re not giving you a complete trace of every step taken. If your auditor asks, "How do we know they tested X?", the report generated from an AI pentest can actually show the log for that exact thing.
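To make the "How do we know they tested X?" question concrete, here is a rough sketch of how such a log could be queried. The `LogEntry` shape and the `entries_for` helper are assumptions made for illustration. Real AI pentesting products will export their logs in their own formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogEntry:
    # Hypothetical log record; field names are illustrative.
    timestamp: str  # ISO 8601 in UTC, so string order matches time order
    agent: str
    method: str
    path: str
    payload: str
    status: int


def entries_for(log, path_prefix):
    """Return every logged request under path_prefix, oldest first."""
    matches = [e for e in log if e.path.startswith(path_prefix)]
    return sorted(matches, key=lambda e: e.timestamp)


# Illustrative data only.
log = [
    LogEntry("2025-03-01T10:00:05Z", "agent-7", "GET", "/api/invoices/42", "", 403),
    LogEntry("2025-03-01T10:00:01Z", "agent-3", "POST", "/api/login", "' OR 1=1 --", 401),
    LogEntry("2025-03-01T10:00:03Z", "agent-7", "GET", "/api/invoices/41", "", 200),
]

for entry in entries_for(log, "/api/invoices"):
    print(entry.timestamp, entry.agent, entry.method, entry.path, entry.status)
```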

Test coverage

AI pentesting covers significant ground. For those asking, "How do we know it tried everything?" the concern applies equally to human pentesters. A manual pentest report that comes back with zero findings and no audit trail of what was tested is being taken entirely on faith. The annual penetration test has become something of a ritual, and you can't prove a human tried everything, anyway. With an AI pentest, you can enumerate detailed test coverage through the logs.

Agents can work through the full OWASP Top 10 in hours. They test authorization checks across every endpoint, not just a representative sample. They try every attack vector on every feature, not just the ones a human tester had time to reach before the engagement ended.
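Enumerating coverage from the logs can be as simple as comparing what was attempted against what should have been attempted. The sketch below assumes the logs can be reduced to (endpoint, check) pairs. The `coverage_gaps` function and the check names are hypothetical.

```python
from itertools import product


def coverage_gaps(endpoints, checks, attempts):
    """List every (endpoint, check) pair that never appears in the attempts.

    attempts is an iterable of (endpoint, check) pairs extracted from the logs.
    """
    attempted = set(attempts)
    return [pair for pair in product(endpoints, checks) if pair not in attempted]


# Illustrative data only.
endpoints = ["/api/users", "/api/invoices"]
checks = ["authorization", "injection"]
attempts = [
    ("/api/users", "authorization"),
    ("/api/users", "injection"),
    ("/api/invoices", "authorization"),
]

gaps = coverage_gaps(endpoints, checks, attempts)
print(gaps)  # [('/api/invoices', 'injection')]
```

An empty result shows that every listed check was attempted on every listed endpoint. It does not show that the endpoint and check lists themselves were complete.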

AIs are improving rapidly in their ability to reason and understand code. They’re finding new, context-dependent vulnerabilities that humans have missed for years. Skeptics assume that AIs can’t handle business logic vulnerabilities. This is no longer the case. In practice, agents read the codebase, understand the intended behavior, and find creative ways to break it. The phrase “If all you have is a hammer, every problem looks like a nail” is apt here. Even if a human tester is really good at finding XSRF vulnerabilities and earns a six-figure bug bounty, the truth of the matter is that AI testing brings a sack full of hammers to the job.

In Aikido Security's head-to-head benchmark across four non-trivial web applications, AI agents found twice as many broken access control vulnerabilities as senior human testers. They also uncovered an e-signature forgery in a payment application that the manual testers didn’t catch at all. The AIs admittedly had a huge advantage because they had access to the source code. An AI absorbs a full codebase almost instantly, while human testers usually work without it for logistical and NDA reasons. But white box, grey box, or black box testing is definitely elevated by the parallelism that agentic pentesting brings to the table.

The benchmark also found that human testers did better at probing for poor configuration hardening and identifying compliance hygiene issues. Since then, AI pentesting has continued to improve. Aikido’s AI pentesting, for example, regularly catches complex IDOR vulnerabilities, which involve authenticating as real users and following long workflows end-to-end.

Third-party integrations, particularly complex OAuth flows and SSO implementations, are harder for agents to navigate consistently. Aikido’s AI pentesting has put in the effort required to solve these issues, but don’t take it for granted that all AI pentesting products can do this.

Reports

The report format maps directly to what compliance teams need. SOC 2 and ISO 27001 get a full PDF with evidence, detailed remediation guidance, and reproduction steps for re-testing after remediations are applied. HIPAA requirements are covered.

Turnaround times for AI pentesting are on the order of hours (definitely less than a day), which is really helpful when you're on a certification timeline or responding to an audit request that includes in-scope assets that have not been tested previously.

What can AI pentesting not do for compliance?

While AI pentests are increasingly being accepted, the tech is still quite new, and some industries and their regulators are still figuring out their stance on the issue.

PCI DSS is more prescriptive than SOC 2 or ISO 27001 and explicitly requires penetration testing at least annually, with specific coverage of cardholder data environments. Its official guidance for pentesting, last updated in 2017, describes penetration testing as 'essentially a manual endeavor' and states that running automated tools alone does not satisfy the requirement. The spirit of the requirement has always been about active exploitation, validated evidence, and judgment applied to results. Human pentesters can use AI pentesting as a tool to handle a large chunk of the heavy lifting on the application side. That said, PCI DSS also requires network-layer and segmentation testing alongside application-layer testing, which AI pentesting won't cover anyway.

For some financial services regulators or government sector requirements, companies will need to check directly with their auditor in order to gauge their openness to seeing continuous monitoring and testing as not just equivalent to point-in-time testing, but significantly superior evidence of security controls and program rigor.

The clearest examples of this are CREST in the UK and FedRAMP in the US. Both share the same underlying issue: they require an accredited human organization to stand behind the assessment, regardless of how the testing was conducted. CREST certification originated in the UK but is an international accreditation program and is a condition of procuring pentesting for many UK-regulated industries, and AI pentesting tools don't yet carry it. Aikido is in the process of getting its AI pentesting CREST certified, so this is likely to change soon.

FedRAMP, which applies to cloud service providers selling to US federal agencies, requires assessments to be conducted by accredited third-party assessment organizations (3PAOs). Recent RFCs for FedRAMP 20x, however, indicate that the program is working on finding ways to modernize its approach to vetting SaaS solutions to protect critical infrastructure and government applications and services.

Physical security testing and social engineering are fully out of scope (phishing tests are required for FedRAMP). We’re a ways off from having AI pentesters walking around turning doorknobs to see if they are locked and sending phishing emails (probably for the best).

We’re more likely to see accredited firms leveraging AI pentesting in discrete places as tools instead of full replacements for their reconnaissance and pentesting. Today, AI pentests can be used in a partner model where an accredited firm reviews and co-signs the work and testing artifacts. That approach is worth exploring if you're operating in either of those markets.

Don’t auditors reject AI pentesting tools as scanners?

The most common objection isn't even about AI pentesting. The problem is automated scanners that masquerade as AI pentesting.

For years, less scrupulous organizations have tried to pass off basic vulnerability scanner output as a pentest report. Tools like Nessus or OpenVAS produce long lists of flagged issues with severity ratings that look credible on paper, but nothing has been validated, exploited, or contextualized. They conflate the concept of a possible vulnerability with a demonstrable attack path. Auditors have seen enough of these to be skeptical of anything that smells like a scan dressed up as a pentest. So you have to make sure your AI pentest is truly AI pentesting, rather than a scanner or DAST wearing AI lipstick.

A real AI pentest actually exploits and confirms vulnerabilities against a live target before surfacing them in a report. You can tell the difference in the report language and details. Validated findings come with proof-of-concept evidence and reproduction steps showing how the exploit was actually executed, whereas unvalidated scanner findings just describe a potential issue with a generic severity rating and include no proof that anything was actually tried. If a report comes back with hundreds of findings and none of them show exploitation evidence, you likely have a scanner on your hands, regardless of what it says on the tin.
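The rule of thumb above can be expressed as a quick triage check on a report's findings. This is a heuristic sketch only: the dictionary keys `proof_of_concept` and `reproduction_steps` and the `min_findings` threshold of 100 are assumptions chosen for illustration, not an established standard.

```python
def validated_share(findings):
    """Fraction of findings that carry both PoC evidence and reproduction steps."""
    if not findings:
        return 0.0
    validated = [
        f for f in findings
        if f.get("proof_of_concept") and f.get("reproduction_steps")
    ]
    return len(validated) / len(findings)


def looks_like_scanner_output(findings, min_findings=100):
    """Flag large reports in which no finding shows exploitation evidence."""
    return len(findings) >= min_findings and validated_share(findings) == 0.0


# Illustrative data only: 150 unvalidated findings, then one validated finding.
unvalidated = [{"title": f"Potential issue {i}", "severity": "medium"} for i in range(150)]
validated = {
    "title": "IDOR on invoice endpoint",
    "severity": "high",
    "proof_of_concept": "GET /api/invoices/42 as user A returned user B's invoice",
    "reproduction_steps": ["log in as user A", "request invoice 42"],
}

print(looks_like_scanner_output(unvalidated))                # True
print(looks_like_scanner_output(unvalidated + [validated]))  # False
```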

This goes back to what we were saying about PCI DSS. The 2017 language describing pentesting as 'essentially a manual endeavor' was written specifically to address the issue of organizations submitting scanner output as a pentest report. The guidance was drawing a line against that practice, not anticipating a world where AI agents actively exploit and validate findings the way human testers do. While AI pentests don't cover all of the PCI DSS pentesting requirements (like network-layer and segmentation testing), AI pentesting tools can help organizations perform application pentesting more efficiently, and we may see these regulations update their wording in the future to address the nuance. The industry tends to move faster than compliance frameworks.

Continuous compliance

Beyond the compliance checkbox, point-in-time or snapshot pentesting is a broken model for anything that ships code more regularly than once per year.

A yearly pentest tells you what your application looked like on the day or week the test ran. But your development team likely pushed new changes the very next day. Three months later, the compliance report is still valid on paper, but your attack surface has changed significantly. The 85% of CISOs and engineering leaders in our survey who say findings are outdated at least sometimes aren't wrong about their situation. The lag is palpable and high-risk.

Continuous pentesting changes your point-in-time assertion into a living record. Instead of telling an auditor "we ran a pentest against production assets in March," you can show them a security testing history that sits right alongside your deployment history. And not just in production, but also in the lower environments. Every change impacting your attack surface was tested, so issues were caught and fixed before they reached production.
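One way to picture a testing history that sits alongside a deployment history is a simple join between the two. The sketch below assumes both records can be keyed by commit identifier. The record shapes and the `untested_deployments` helper are hypothetical.

```python
def untested_deployments(deployments, tested_commits):
    """Return deployments whose commit has no matching pentest run."""
    tested = set(tested_commits)
    return [d for d in deployments if d["commit"] not in tested]


# Illustrative data only.
deployments = [
    {"id": "d1", "commit": "a1"},
    {"id": "d2", "commit": "b2"},
    {"id": "d3", "commit": "c3"},
]
tested_commits = ["a1", "c3"]

print(untested_deployments(deployments, tested_commits))
# [{'id': 'd2', 'commit': 'b2'}]
```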

Banks and heavily regulated industries are currently forced to slow down release cycles, specifically to get features and functionality pentested before being shipped. Continuous AI pentesting changes that, because testing runs in stride with your deployment cadence, checking only what changed, so releases don't have to wait for security reviews.
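"Checking only what changed" could work along the following lines. This is a sketch under a strong assumption: that a mapping from source-path prefixes to the endpoints they serve is available. The `route_map` structure and `endpoints_to_retest` function are hypothetical and do not describe how any particular product selects its targets.

```python
def endpoints_to_retest(changed_files, route_map):
    """Map changed source files to the endpoints that should be retested.

    route_map maps a source-path prefix to the endpoints served by code
    under that prefix.
    """
    targets = set()
    for path in changed_files:
        for prefix, endpoints in route_map.items():
            if path.startswith(prefix):
                targets.update(endpoints)
    return sorted(targets)


# Illustrative data only.
route_map = {
    "billing/": ["/api/invoices", "/api/payments"],
    "auth/": ["/api/login"],
}
changed_files = ["billing/invoice_service.py", "docs/README.md"]

print(endpoints_to_retest(changed_files, route_map))
# ['/api/invoices', '/api/payments']
```

Files that match no prefix, such as documentation, trigger no retest in this sketch. A production system would also need to account for shared code that affects many endpoints at once.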

See what an audit-grade AI pentest looks like

Auditors are checking that a test happened, that it followed a defined testing methodology, that test findings were documented with evidence, and that critical issues were addressed. An AI pentest report satisfies all of those requirements. The frameworks that define what counts as a test report and compliance artifact don't specify who or what ran the test.

If you're working toward SOC 2 compliance, ISO 27001, HITRUST, or a similar certification and want to see what the report looks like before committing, you can request a sample report or run a feature scan against your application. Most teams find the AI pentest format isn't a surprise to their auditors at all.

At Aikido, we’ve seen really good outcomes with our customers using AI pentesting for compliance. While we promise to do a manual pentest if your AI pentest is rejected by an auditor, so far, we haven’t seen it happen. Talk to us today to unlock fast, compliant pentesting.

FAQ

Does AI pentesting work for SOC 2 compliance?

Yes, in most cases. SOC 2 doesn't specify who or what conducts a pentest, only that testing happened, findings were documented with evidence, and critical issues were addressed.

Will auditors accept an AI pentest report?

Most will, provided the report includes validated findings with proof-of-concept evidence, a methodology section, severity ratings, and remediation guidance. The main risk of rejection is submitting automated scanner output dressed up as a pentest, not a genuine AI pentest.

What's the difference between AI pentesting and automated scanning?

Automated scanners pattern-match against known vulnerability signatures and flag potential issues without confirming whether they're actually exploitable. A genuine AI pentest reasons about how the application works, attempts to exploit findings against a live target, and only surfaces vulnerabilities that were actually confirmed.

Does SOC 2 require a human pentester?

No. SOC 2 is outcomes-based, meaning it defines what your controls must demonstrate based on your written security policies rather than how testing must be conducted. The framework maps to Common Criteria controls like CC4.1 and CC7.1, and a well-documented AI pentest report satisfies those requirements.

Can AI pentesting replace manual pentesting for compliance?

For most SOC 2, ISO 27001, and HIPAA programs, yes. Certain regulated environments, like UK industries requiring CREST certification or US federal agencies requiring FedRAMP authorization, have accreditation requirements that currently need a human to co-sign the work.

How often do I need to run a pentest for compliance?

Most frameworks expect annual testing at minimum, plus retesting after significant changes are introduced to your application or infrastructure. AI pentesting can cover this for SOC 2 and ISO 27001. PCI DSS is the more prescriptive framework, explicitly requiring both internal and external tests annually and after any significant change to the cardholder data environment. AI pentesting covers much of the application-layer portion of that requirement, but it needs to be operated by a human pentester. Network-layer and segmentation testing require separate coverage, typically from a human pentester.

What frameworks explicitly require penetration testing?

PCI DSS explicitly requires it under section 11.4, and FedRAMP requires it as part of cloud service provider authorization. SOC 2, ISO 27001, and HIPAA don't mandate it outright, but auditors routinely expect it as evidence that security controls are working.

What does a pentest report need to include for compliance?

At minimum: an executive summary, a methodology and scope section, validated findings with proof-of-concept evidence and reproduction steps, severity ratings, and a remediation plan. For SOC 2 specifically, findings should map to the relevant Trust Services Criteria.

Is AI pentesting accepted for ISO 27001?

Yes. ISO 27001 recommends pentesting as part of ongoing risk assessment but doesn't specify how it must be conducted. A report that documents what was tested, how, and what was found satisfies the framework's evidence requirements.

What are the limitations of AI pentesting with compliance?

Physical security testing and social engineering are out of scope for any application-focused pentest, AI or otherwise. PCI DSS needs a human pentester for network and segmentation testing at a minimum. Industries with specific accreditation requirements, such as CREST in the UK or 3PAO requirements under FedRAMP, may need additional steps before an AI pentest fully satisfies their compliance obligations.