Last month, the Mexican government was hacked. 150 GB of government data was stolen, including 195 million taxpayer records. The attack exploited roughly two dozen vulnerabilities across ten institutions. In the past, a breach like this would likely have taken a skilled team months to pull off.

But of course, we’re living in a new age. This attack was executed by one person and their Claude Code assistant. In just over a thousand prompts, this hacker and their AI sidekick used full lateral movement, automated exfiltration, exploit scripting, and credential stuffing to pull off the maneuver. Imagine the damage if this attacker had used fancier tools and had a whole team!

What this attack confronts us with is that people can suddenly call themselves hackers and pull off big heists with just a commercial AI subscription and persistence. This is a whole new ball game, and AI tools have handed a massive power-up to whoever moves first. Hackers now have supercharged toys. But AI can give the same superpower to defenders and protectors.

Attackers already have their superpowered toys

Attackers have been playing with these super toys for a while, longer than many security teams have been thinking about AI defense.

According to CrowdStrike's 2026 Global Threat Report, AI-enabled attacks surged 89% year over year. The average time from initial access to lateral movement now sits at 29 minutes. The fastest observed breakout in their dataset happened in 27 seconds.

AI is also pulling in a whole new generation of threat actors. Hacking has traditionally demanded real expertise, with phishing and basic credential stuffing as perhaps the only exceptions. Writing exploit code and troubleshooting malware are just prompting problems now. A single bad actor can generate an entire toolkit with the help of an AI assistant. The skill floor dropped fast, and as AI gets smarter, the attackers don’t have to be.

The Mexico attacker sat somewhere around the middle of the skill spectrum. They had enough knowledge to achieve initial access independently, and they crafted a working jailbreak through persistence, reframing the attack as a bug bounty engagement until Claude relented. But there was some evidence that this attacker wasn’t a pro. They left the entire conversation log sitting in a public location (a sophisticated attacker covers their tracks better). The logs also showed the attacker asking Claude in real time which other agencies to hit next, suggesting the campaign was partly opportunistic rather than a well-crafted plan from the start. They had enough skill to get in the door, but Claude did the real work here.

While the Mexico attacker had some hacking knowledge, a new wave of AI-assisted hackers has very little technical knowledge. The FortiGate campaign, documented by Amazon Threat Intelligence in February 2026, is an example. An attacker with relatively low technical skills compromised over 600 FortiGate firewalls across 55 countries in five weeks. No zero-days. No novel techniques. The hacker got initial access by scanning for exposed management ports and trying commonly reused credentials. Then the AI took over, creating the attack plans, writing the tools, and in some cases, executing offensive tools without the attacker approving each command. When the attacker ran into targets they couldn't handle, they just moved on. The AI made them look capable until it couldn't, and then their actual ability showed.

Attackers used to have mad skills. They don’t need to anymore, and the barrier to entry will continue to fall.

Defenders need their own upgrade

Protectors can’t rely on the tools and systems from before. What the Mexico and FortiGate attacks confirmed is that AI can reason through complex vulnerability chains fast enough to make them exploitable at scale. The same AI that wrote exploit scripts and mapped lateral movement paths for a lone attacker can, and needs to, work in reverse for your security team. Give that capability to defenders, and they can find those chains before an attacker does.

Defenders need to fight AI with AI. Signature-based detection has no pattern to match against a script that was invented on the fly and has never existed before. SAST catches the patterns its rules were written to recognize, and has no framework for a vulnerability chain an AI assembled in real time from your specific codebase. If AI is generating novel attack paths that human pentesters wouldn't think to try, the only way to find those paths before an attacker does is to run AI against your own application first.
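To make the signature problem concrete, here is a minimal sketch in Python. It assumes the simplest possible form of signature, an exact SHA-256 hash of a known payload; real detection engines use richer patterns, but the blind spot is the same in kind. The two payloads and every name in the snippet are hypothetical and exist only for illustration.

```python
import hashlib


def sha256_hex(payload: bytes) -> str:
    """Return the SHA-256 digest of a payload as a hex string."""
    return hashlib.sha256(payload).hexdigest()


# Hypothetical payload that the scanner has catalogued before.
KNOWN_SCRIPT = b"import os\nfor f in os.listdir('.'):\n    print(f)\n"

# Same behavior, freshly generated with a different variable name.
NOVEL_SCRIPT = b"import os\nfor entry in os.listdir('.'):\n    print(entry)\n"

# The signature database only knows the payload it has already seen.
SIGNATURES = {sha256_hex(KNOWN_SCRIPT)}


def matches_signature(payload: bytes) -> bool:
    """Exact-match lookup: True only for byte-identical known payloads."""
    return sha256_hex(payload) in SIGNATURES


print(matches_signature(KNOWN_SCRIPT))  # True
print(matches_signature(NOVEL_SCRIPT))  # False
```

Renaming one variable is enough to slip past the lookup. A script written from scratch for a single target changes far more than that.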

AI makes defenders capable of things that were out of reach before. A single security engineer can now run more than a full red team could, in terms of speed, depth, and coverage. Instead of choosing which parts of the application to test before a release, continuous AI pentesting lets you test all of it, on every deploy, with AI agents running through hundreds of attack paths in parallel and feeding the findings back into the process in real time. Teams can operate at a different scale and shrink the window of time that the application stays untested.
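The fan-out idea can be sketched in a few lines of Python. This is an illustrative toy, not a description of how any particular product works: the `APP` snapshot, the three checks, and `run_on_deploy` are all hypothetical names, and simple configuration checks stand in for what would really be AI agents probing a live application.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Finding:
    path: str    # which attack path produced the finding
    detail: str  # human-readable description


# Hypothetical snapshot of a freshly deployed application.
APP = {
    "routes": {
        "/admin": {"auth_required": False},
        "/profile": {"auth_required": True},
    },
    "debug": True,
}


def check_unauthenticated_admin(app: dict) -> Optional[Finding]:
    route = app["routes"].get("/admin")
    if route is not None and not route["auth_required"]:
        return Finding("unauthenticated-admin", "/admin is reachable without auth")
    return None


def check_debug_mode(app: dict) -> Optional[Finding]:
    if app.get("debug"):
        return Finding("debug-mode", "debug mode is enabled in the deployed build")
    return None


def check_open_profile(app: dict) -> Optional[Finding]:
    route = app["routes"].get("/profile")
    if route is not None and not route["auth_required"]:
        return Finding("open-profile", "/profile is reachable without auth")
    return None


# Stand-ins for the attack paths; a real run would have hundreds.
ATTACK_PATHS = [check_unauthenticated_admin, check_debug_mode, check_open_profile]


def run_on_deploy(app: dict, max_workers: int = 8) -> List[Finding]:
    """Run every attack path in parallel and collect the findings."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(lambda check: check(app), ATTACK_PATHS))
    return [finding for finding in results if finding is not None]


for finding in run_on_deploy(APP):
    print(f"{finding.path}: {finding.detail}")
```

Hooking a function like `run_on_deploy` into the deploy pipeline is what turns a periodic pentest into a continuous one: every release gets the full set of paths, not a hand-picked subset.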

Suit up and go

The Mexico and FortiGate attackers succeeded because they had supercharged AI upgrades. Vulnerabilities are going to be easier for hackers to find, and more bad actors are going to get in on it. Defenders need their own superpowered toys to hold off hackers armed with super weapons.

Consider Tony Stark, a genius engineer and strategist in his own right. Equipped with the Iron Man suit and JARVIS, he’s a full superhero. The AI and the suit handle scale while Stark makes the calls. Together, they’re unbeatable.

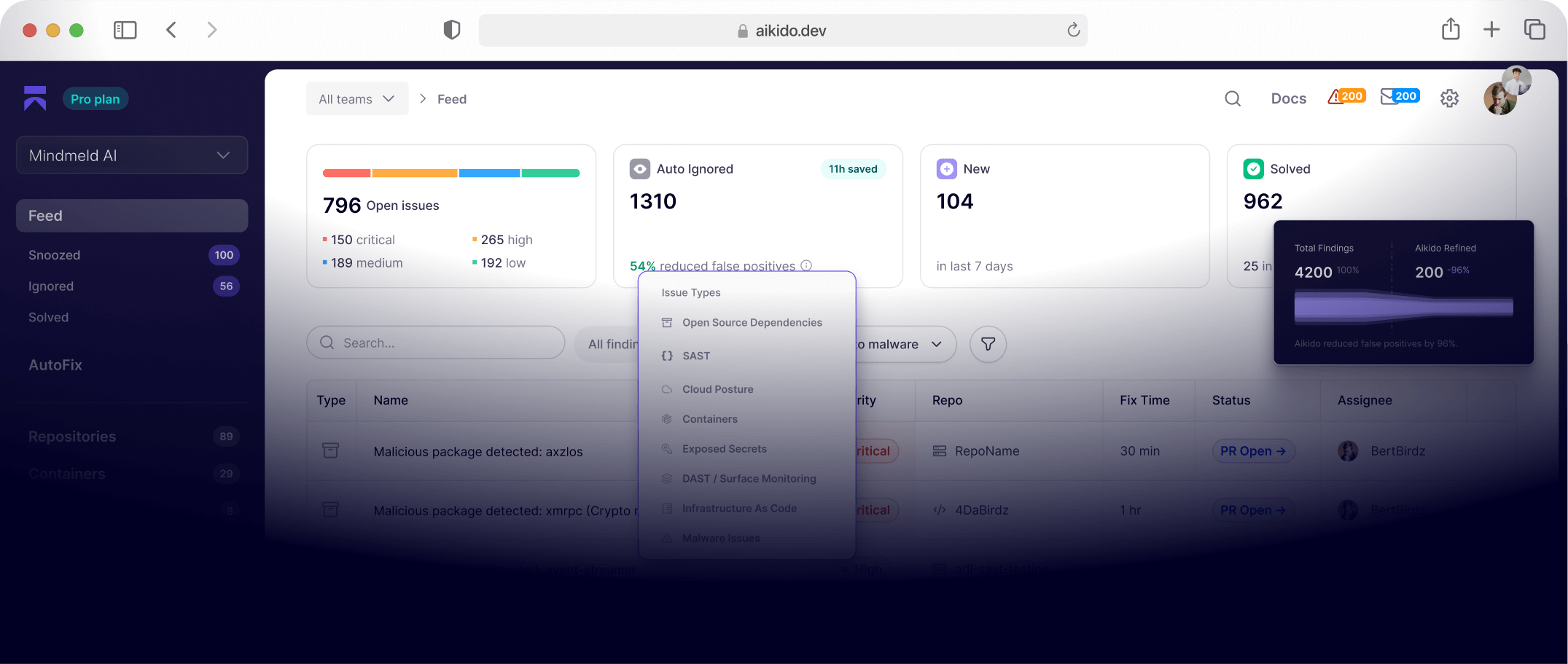

Aikido Infinite is the superpower suit for security teams. Continuous AI pentesting acts like a team of elite hackers dedicated entirely to your application, on every deploy, around the clock. Your best people stop triaging OWASP Top 10 issues for good and get back to fighting the bigger battles. Read more about how it works in the Infinite product launch post.

Attackers have their suits now. Aikido Infinite is yours. Suit up.