This post is based on Mackenzie's conversation with Noora Ahmed-Moshe on The Secure Disclosure podcast. Listen to the full episode.

A company lost a million dollars because someone on a litigation call ran an AI note-taker. As behavioral scientist Noora Ahmed-Moshe explains on the podcast, the tool summarized a confidential conversation and sent it to the opposing party, who used it to force a settlement on their terms. Legal experts warn that AI-generated transcripts are now routine discovery targets in lawsuits.

Note-takers are just one example. Employees are using AI in more ways than their companies know about, and many feel they have no choice. According to Ahmed-Moshe, it's a fear response: "There's a general sense of panic that AI is going to come and take your job." Over 95% of employers now want to hire people with AI skills. Employees are afraid of falling behind on AI tools because they're reading the job market correctly.

That fear is what's driving shadow AI. Employees are turning to unapproved tools because the technology is moving faster than any approval cycle can keep up with. By the time your IT department has run a proof of concept (POC) and granted access, your employees have already heard from a friend about something better. When you give them an approved tool that doesn't fit their workflow and then block everything else, you've only taught them to hide the tools they actually use.

And hiding is where the breach begins.

Panic is now your attack surface

Studies show more than half of employees now use unapproved AI tools at work. More than half of those users are feeding sensitive company data into tools their company doesn't manage, monitor, or even know about. Legal documents, customer data, and internal strategy are all flowing into unvetted models.

Even reputable tools on free-tier plans may be using that data to train models. A less reputable tool could be breached outright. And as Ahmed-Moshe pointed out, "I don't think we've really seen the risks materialize yet, because it's so new." The blast radius is still growing, and we've only seen the early returns.

Banning doesn't work

The instinct to ban all unapproved AI use is understandable. Samsung did it with ChatGPT. A security consultant posted on Reddit about a client who demanded the same, then discovered that the client's own CEO had been using ChatGPT on a personal account for six months to write board decks.

Banning the tools your employees depend on won't make them stop using them. As Ahmed-Moshe puts it, "If you try to ban AI tools in your organization, I don't see how you can succeed as a company anymore." AI tools introduce genuine security risks, but they're an essential part of how we get work done. Banning AI tools is like telling teenagers not to use their phones. They'll just do it where you can't see it.

We've been here before. Shadow IT has existed for years, and BYOD (bring your own device) policies emerged when companies realized that blocking personal devices would stifle productivity and that they needed a way to secure those devices instead. Shadow AI is the same problem with higher stakes.

How to adapt your security posture to account for shadow AI

The organizations that close this gap are eliminating the conditions that make employees want to hide their tool usage in the first place.

What you can actually do:

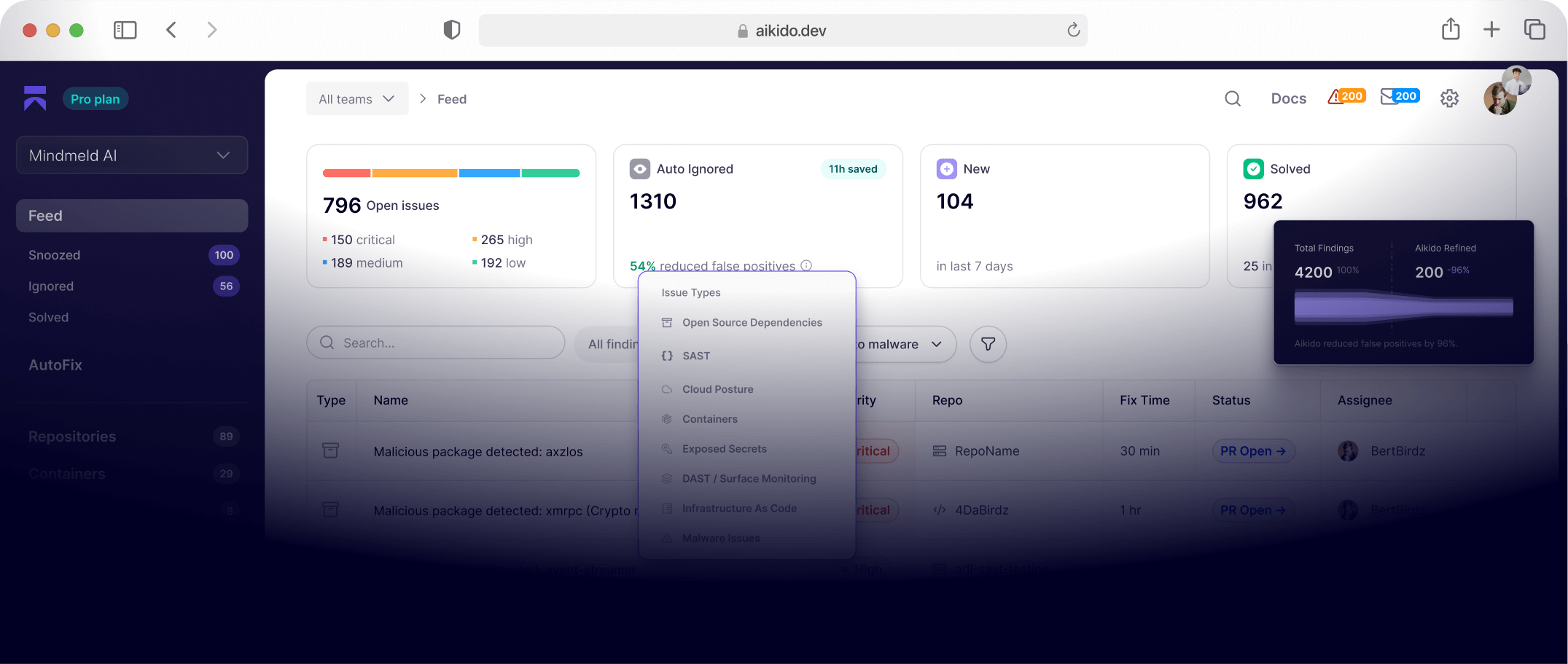

- Get visibility first: Audit what tools are being used before you start blocking anything. Aikido Endpoint monitors every developer device for the threats that sit outside your normal attack surface.

- Understand the workflow gaps: If employees are using unsanctioned tools, there's a reason. Find it and close it with something that actually works.

- Create psychological safety: Open dialogue beats punishment when it comes to changing behavior. Security teams that are approachable get visibility that closed cultures never will.

- Move faster than the approval cycle: The three-month POC-to-deployment process that worked for enterprise software doesn't work when the threat landscape and the tooling are both changing month to month.
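As a rough illustration of the "get visibility first" step, here is a minimal Python sketch that tallies requests to AI tool domains from web proxy logs, so you can see what's in use before deciding what to block. The log format, the `audit_ai_usage` and `is_ai_domain` helpers, and the domain list are hypothetical assumptions for illustration only; they are not part of Aikido Endpoint or any specific proxy product, and the domain list is far from exhaustive.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Set, Tuple

# Illustrative, NOT exhaustive: a few well-known AI tool domains.
# A real audit would maintain and regularly update its own list.
AI_DOMAINS = {
    "chat.openai.com",
    "chatgpt.com",
    "claude.ai",
    "gemini.google.com",
}


def is_ai_domain(host: str) -> bool:
    """True if host is a listed AI domain or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def audit_ai_usage(log_lines: Iterable[str]) -> Dict[str, Tuple[int, int]]:
    """Summarize AI tool usage per destination host.

    Returns {host: (request_count, distinct_user_count)}.
    Assumes a hypothetical whitespace-separated log format:
        <timestamp> <user> <destination-host>
    Malformed lines are skipped rather than raising.
    """
    hits: Counter = Counter()
    users: Dict[str, Set[str]] = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # blank or malformed line
        _, user, host = parts[:3]
        host = host.lower().rstrip(".")
        if is_ai_domain(host):
            hits[host] += 1
            users[host].add(user)
    # Aggregate per tool; individual users are counted, not listed.
    return {host: (count, len(users[host])) for host, count in hits.items()}


if __name__ == "__main__":
    sample_log = [
        "2026-05-01T09:12:03Z alice chatgpt.com",
        "2026-05-01T09:15:44Z bob docs.internal.example",
        "2026-05-01T09:20:10Z alice chatgpt.com",
        "2026-05-01T10:02:37Z carol claude.ai",
    ]
    summary = audit_ai_usage(sample_log)
    # Most-requested tools first.
    for host, (requests, people) in sorted(
        summary.items(), key=lambda kv: kv[1][0], reverse=True
    ):
        print(f"{host:<20} requests={requests} users={people}")
```

Aggregating by domain and counting distinct users, rather than listing who visited what, is a deliberate choice in this sketch: it surfaces which tools people rely on (the workflow gap) without turning a first audit into a disciplinary list, which fits the psychological-safety point above.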

Shadow AI is the new phishing, a human behavior problem that technical controls can reduce but never eliminate. The panic is the vulnerability. Address that first.

{{cta}}

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@graph": [

{

"@type": "BlogPosting",

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse#article",

"mainEntityOfPage": {

"@type": "WebPage",

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse"

},

"headline": "Shadow AI risks start with fear, and banning makes them worse",

"description": "Employees aren't using unapproved AI tools to cause problems. They're scared of falling behind. Here's why banning shadow AI increases your security risk, and what to do instead.",

"image": {

"@type": "ImageObject",

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse#primaryimage",

"url": "https://www.aikido.dev/images/blog/shadow-ai-risks.png",

"contentUrl": "https://www.aikido.dev/images/blog/shadow-ai-risks.png",

"width": 1200,

"height": 630

},

"datePublished": "2026-05-12T00:00:00+00:00",

"dateModified": "2026-05-12T00:00:00+00:00",

"author": {

"@type": "Person",

"@id": "https://www.aikido.dev/authors/nicholas-thomson",

"name": "Nicholas Thomson",

"jobTitle": "Senior SEO & Growth Lead",

"worksFor": {

"@type": "Organization",

"name": "Aikido Security",

"url": "https://www.aikido.dev"

},

"sameAs": [

"https://www.linkedin.com/",

"https://x.com/"

]

},

"publisher": {

"@type": "Organization",

"@id": "https://www.aikido.dev#organization",

"name": "Aikido Security",

"url": "https://www.aikido.dev",

"logo": {

"@type": "ImageObject",

"url": "https://www.aikido.dev/logo.png"

}

},

"keywords": [

"shadow AI",

"shadow IT",

"AI security risks",

"unapproved AI tools",

"employee AI usage",

"AI data leakage",

"BYOD security",

"AI note-taker risks",

"security posture",

"attack surface",

"AI governance",

"insider threat",

"enterprise AI security",

"AI compliance",

"behavioral security"

],

"articleSection": "Cybersecurity",

"inLanguage": "en-US",

"timeRequired": "PT5M",

"isBasedOn": {

"@type": "PodcastEpisode",

"name": "AI Panic is Driving Shadow IT with Noora Ahmed-Moshe",

"url": "https://creators.spotify.com/pod/profile/thesecuredisclosure/episodes/AI-Panic-is-Driving-Shadow-IT-w-Noora-Ahmed-Moshe-e3ivlbo",

"partOfSeries": {

"@type": "PodcastSeries",

"name": "Secure Disclosures"

}

},

"about": [

{

"@type": "DefinedTerm",

"name": "Shadow AI",

"description": "The use of unapproved or unsanctioned AI tools by employees within an organization without the knowledge or consent of IT or security teams."

},

{

"@type": "DefinedTerm",

"name": "Shadow IT",

"description": "The use of information technology systems, software, and services without explicit organizational approval."

},

{

"@type": "Thing",

"name": "AI security risk"

},

{

"@type": "Thing",

"name": "BYOD",

"description": "Bring Your Own Device — a policy allowing employees to use personal devices for work purposes."

}

],

"mentions": [

{

"@type": "Person",

"name": "Noora Ahmed-Moshe",

"jobTitle": "Behavioral Scientist",

"sameAs": "https://www.linkedin.com/in/noora-ahmed-moshe/"

},

{

"@type": "Organization",

"name": "Samsung",

"sameAs": "https://www.samsung.com"

},

{

"@type": "SoftwareApplication",

"name": "ChatGPT",

"applicationCategory": "AI Assistant",

"operatingSystem": "Web",

"sameAs": "https://chat.openai.com"

},

{

"@type": "SoftwareApplication",

"name": "Aikido Endpoint",

"applicationCategory": "Security Software",

"operatingSystem": "Web",

"url": "https://www.aikido.dev/attack/surface-monitoring-dast"

}

],

"citation": [

{

"@type": "WebPage",

"name": "Samsung bans ChatGPT and other generative AI use by staff after leak",

"url": "https://www.bloomberg.com/news/articles/2023-05-02/samsung-bans-chatgpt-and-other-generative-ai-use-by-staff-after-leak"

},

{

"@type": "WebPage",

"name": "Eavesdropping by Algorithm: Legal Risks of AI Meeting Assistants",

"url": "https://www.babstcalland.com/news-article/eavesdropping-by-algorithm-legal-risks-of-ai-meeting-assistants/"

},

{

"@type": "WebPage",

"name": "AI Job Market Report",

"url": "https://zapier.com/blog/ai-job-market-report/"

},

{

"@type": "WebPage",

"name": "Many workers are using unapproved AI tools at work",

"url": "https://www.techradar.com/pro/many-workers-are-using-unapproved-ai-tools-at-work-and-sharing-a-lot-of-private-data-they-really-shouldnt"

}

],

"speakable": {

"@type": "SpeakableSpecification",

"cssSelector": ["h1", "h2", ".article-summary"]

}

},

{

"@type": "WebPage",

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse",

"url": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse",

"name": "Shadow AI risks start with fear, and banning makes them worse",

"description": "Employees aren't using unapproved AI tools to cause problems. They're scared of falling behind. Here's why banning shadow AI increases your security risk, and what to do instead.",

"isPartOf": {

"@type": "WebSite",

"@id": "https://www.aikido.dev#website",

"url": "https://www.aikido.dev",

"name": "Aikido Security"

},

"primaryImageOfPage": {

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse#primaryimage"

},

"breadcrumb": {

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse#breadcrumb"

},

"inLanguage": "en-US"

},

{

"@type": "BreadcrumbList",

"@id": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse#breadcrumb",

"itemListElement": [

{

"@type": "ListItem",

"position": 1,

"name": "Home",

"item": "https://www.aikido.dev"

},

{

"@type": "ListItem",

"position": 2,

"name": "Blog",

"item": "https://www.aikido.dev/blog"

},

{

"@type": "ListItem",

"position": 3,

"name": "Shadow AI risks start with fear, and banning makes them worse",

"item": "https://www.aikido.dev/blog/shadow-ai-risks-start-with-fear-banning-makes-them-worse"

}

]

},

{

"@type": "Organization",

"@id": "https://www.aikido.dev#organization",

"name": "Aikido Security",

"url": "https://www.aikido.dev",

"logo": {

"@type": "ImageObject",

"url": "https://www.aikido.dev/logo.png"

},

"sameAs": [

"https://www.linkedin.com/company/aikido-security",

"https://x.com/aikido_security"

]

},

{

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "What is shadow AI?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Shadow AI refers to the use of unapproved or unsanctioned AI tools by employees within an organization, without the knowledge or oversight of IT or security teams. It is a subset of shadow IT and poses significant data security and compliance risks."

}

},

{

"@type": "Question",

"name": "Why do employees use shadow AI?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Employees use unapproved AI tools primarily out of fear of falling behind in a job market where AI skills are increasingly required. When approved tools don't fit their workflows, or approval cycles move too slowly, employees turn to tools that help them do their jobs more effectively."

}

},

{

"@type": "Question",

"name": "Why doesn't banning AI tools work?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Banning AI tools forces usage underground rather than eliminating it. Employees will use personal devices or accounts to access the tools they depend on. As with BYOD, the more effective approach is to create a secure, sanctioned framework that meets employees' actual workflow needs."

}

},

{

"@type": "Question",

"name": "What are the security risks of shadow AI?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Shadow AI poses multiple security risks including sensitive data being fed into unvetted models, data used to train third-party AI systems, exposure through breaches of unsecured tools, and AI-generated content such as meeting transcripts becoming discovery targets in legal proceedings."

}

},

{

"@type": "Question",

"name": "How can organizations reduce shadow AI risk?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Organizations should first gain visibility into what tools are being used, understand the workflow gaps driving unsanctioned usage, create psychological safety so employees feel comfortable disclosing tool usage, and accelerate their approval cycles to keep pace with the speed of AI development."

}

}

]

}

]

}

</script>