Preventing total cloud takeover via SSRF attacks

This is the story of an attacker who gained access to a startup’s Amazon S3 buckets, environment variables, and various internal API secrets, all via a simple form that sends an email. Even though this is a true story, I’m keeping the startup’s name a secret.

How they got in

It all starts with a PHP application sending out an email. To attach some fancy images to the email, the application first needs to download them. In PHP this is easy: you use the function file_get_contents, and that is where the fun begins.

Of course, some of the user input for this email was not fully checked or HTML-encoded, so a user could include an image such as <img src="evil.com"/>. In theory, this is not too bad, but sadly this PHP function is very powerful and can do much more than load images over the Internet. It can also read local files and, more importantly, fetch URLs on the local network instead of the Internet.

Instead of evil.com, the attacker entered a special link-local URL that points at the EC2 instance metadata service. With a simple GET request to this service, you can get the IAM credentials linked to the role of the AWS EC2 server you're running on.

<img src="http://169.254.169.254/latest/meta-data/">

The result was that the attacker received an email with the EC2 server's IAM credentials as an attachment. Those keys give the attacker the ability to impersonate that server when talking to various AWS services. It all goes downhill from there...

Why is this even possible in the first place?

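The system that serves these IAM keys is the EC2 instance metadata service. The version exploited here is called IMDSv1, and Amazon released a new version in 2019 called IMDSv2. With IMDSv2, you first need to send a PUT request with a special header to obtain a session token, and then pass that token in a header on every metadata request, including the one for your IAM credentials. That means a simple GET-based URL loading function like file_get_contents can no longer cause that much damage.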
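As a rough illustration of the IMDSv2 flow, here is a minimal Python sketch using only the standard library. The helper names (build_token_request, build_metadata_request, fetch_metadata) are made up for this example. The endpoint paths and header names are the ones AWS documents for IMDSv2.

```python
import urllib.request

IMDS_BASE = "http://169.254.169.254"
TOKEN_TTL_SECONDS = 21600  # six hours, the maximum lifetime IMDSv2 accepts


def build_token_request(ttl_seconds: int = TOKEN_TTL_SECONDS) -> urllib.request.Request:
    """Step 1: a PUT request that asks the metadata service for a session token."""
    return urllib.request.Request(
        IMDS_BASE + "/latest/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": str(ttl_seconds)},
    )


def build_metadata_request(path: str, token: str) -> urllib.request.Request:
    """Step 2: a GET request that carries the session token in a header."""
    return urllib.request.Request(
        IMDS_BASE + "/latest/meta-data/" + path.lstrip("/"),
        method="GET",
        headers={"X-aws-ec2-metadata-token": token},
    )


def fetch_metadata(path: str, timeout: float = 2.0) -> str:
    """Run both steps in order. This only works on an EC2 instance."""
    with urllib.request.urlopen(build_token_request(), timeout=timeout) as resp:
        token = resp.read().decode()
    request = build_metadata_request(path, token)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.read().decode()
```

A function that can only issue a plain GET for a user-supplied URL, as file_get_contents did in this story, never completes step 1, so it has no token to present in step 2.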

It’s unclear how widely IMDSv2 has been adopted as of 2023, but Amazon is clearly still taking measures to increase adoption, and we still see IMDSv1 being used in the wild.

The compromise of the IAM keys led to further compromises: the S3 buckets could be listed and their contents read. To make matters worse, one of the S3 buckets contained a CloudFormation template, which contained sensitive environment variables (e.g. SendGrid API keys).

How do I defend my cloud infrastructure against this?

Now, what could be done to prevent this total loss of data? Your developers could be extra careful and use an allowlist for the URLs they pass on to file_get_contents. They could even verify that the content they receive is an image if they are expecting an image. The reality, however, is that these kinds of mistakes are hard to avoid as a developer.
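To make those two application-level checks concrete, here is a minimal sketch, written in Python rather than PHP. The host names in ALLOWED_IMAGE_HOSTS and the function names are hypothetical. The byte signatures are the standard leading bytes of PNG, JPEG and GIF files.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this application may fetch images from.
ALLOWED_IMAGE_HOSTS = {"images.example.com", "cdn.example.com"}

# Leading bytes ("magic numbers") of the image formats we accept.
IMAGE_SIGNATURES = (
    b"\x89PNG\r\n\x1a\n",  # PNG
    b"\xff\xd8\xff",       # JPEG
    b"GIF87a",             # GIF, 1987 variant
    b"GIF89a",             # GIF, 1989 variant
)


def is_allowed_image_url(url: str) -> bool:
    """Accept only https URLs whose host is explicitly on the allowlist."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False  # rejects file://, plain http://, and scheme-less input
    host = (parts.hostname or "").lower()
    return host in ALLOWED_IMAGE_HOSTS


def looks_like_image(data: bytes) -> bool:
    """Check that the downloaded bytes start with a known image signature."""
    return data.startswith(IMAGE_SIGNATURES)
```

With these checks, http://169.254.169.254/latest/meta-data/ fails the scheme test and the host test, and a JSON credentials document fails the signature test. A real fetcher would also need to refuse redirects to hosts outside the allowlist, which this sketch does not cover.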

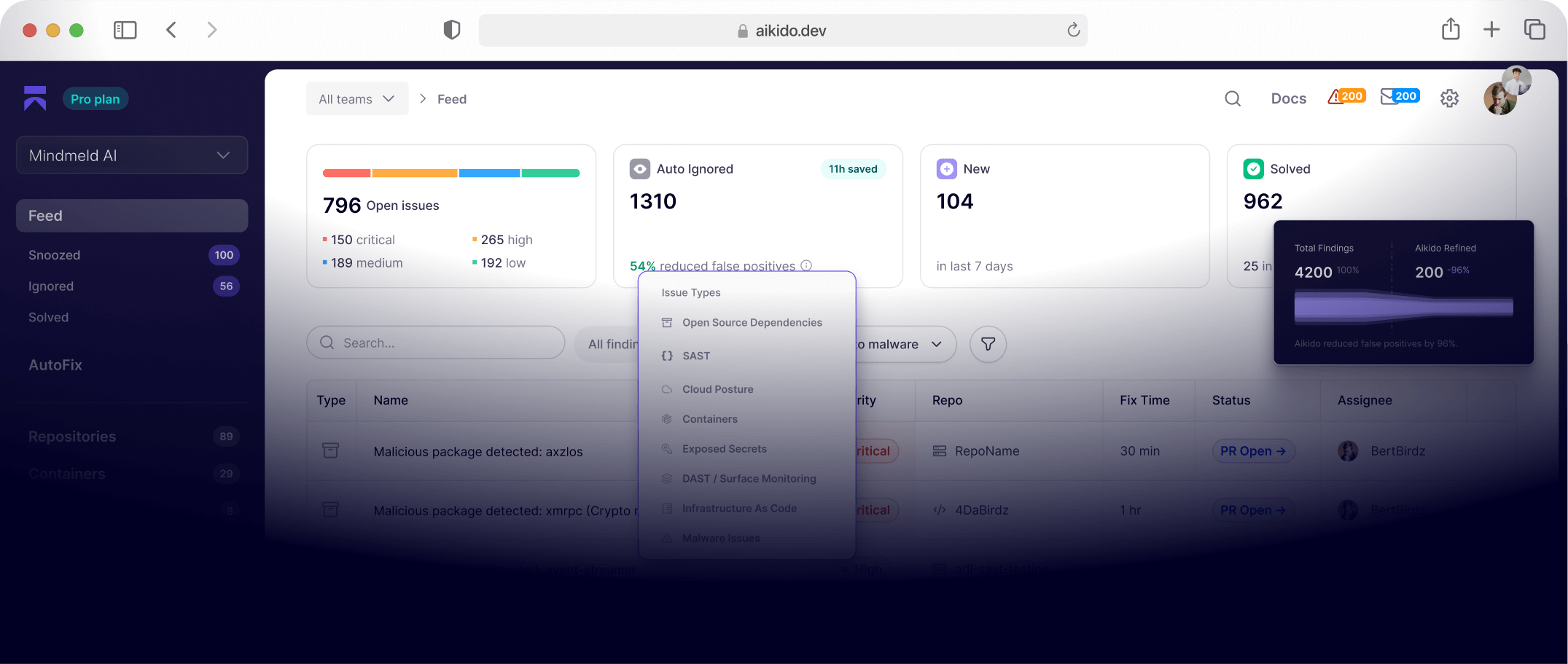

It would be a lot better to ensure your infrastructure has extra defenses against these attacks. Our new integration with AWS inside of Aikido Security will alert you if your cloud does not actively take any of the following measures. Each of these measures on its own would have stopped this particular attack, but we recommend implementing all of them. Use our free trial account to see if your cloud is already defended against these threats. See how Aikido protects your app against vulnerabilities here.

Steps to take:

- Migrate your existing IMDSv1 EC2 nodes to use IMDSv2

- Don’t store any secrets at all in the environment of your web servers or in CloudFormation templates. Use a tool such as AWS Secrets Manager to load the secrets at run-time.

- When assigning IAM roles to your EC2 servers, make sure they have extra conditions, such as restricting them to be usable only from within your local AWS network (your VPC). The example below allows your server to read from S3, but only if the EC2 server is communicating via a specific VPC endpoint. That’s only possible from within your network, so the attacker wouldn’t have been able to get to the S3 buckets from their local machine.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "rule-example",
      "Effect": "Allow",
      "Action": "s3:getObject",
      "Resource": "arn:aws:s3:::bucketname/*",
      "Condition": {
        "StringEquals": {
          "aws:SourceVpce": "vpce-1s0d54f8e1f5e4fe"
        }
      }
    }
  ]
}
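The first two steps in the list can also be sketched in code. This is a minimal Python example that assumes the boto3 AWS SDK. The function names, the instance ID and the secret name are hypothetical, and the clients are passed in as parameters so the functions stay easy to test. It also assumes the secret is stored as a JSON string.

```python
import json


def require_imdsv2(ec2_client, instance_id: str) -> None:
    """Make session tokens mandatory, which disables IMDSv1 on the instance."""
    ec2_client.modify_instance_metadata_options(
        InstanceId=instance_id,
        HttpTokens="required",   # "optional" would still allow IMDSv1 requests
        HttpEndpoint="enabled",  # keep the metadata service itself reachable
    )


def load_secret(secrets_client, secret_id: str) -> dict:
    """Fetch a JSON secret at run-time instead of reading it from the environment."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


# Example usage (assumes boto3 is installed and AWS credentials are configured):
#
#   import boto3
#   require_imdsv2(boto3.client("ec2"), "i-0123456789abcdef0")  # hypothetical ID
#   sendgrid = load_secret(boto3.client("secretsmanager"), "prod/sendgrid")  # hypothetical name
```

Loading the secret on demand means it is never written into a CloudFormation template or an environment dump, so reading those no longer reveals it. The role still needs permission to read that specific secret, so keeping that permission narrow matters just as much.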

About “The Kill Chain”

The Kill Chain is a series of real-life stories of attackers getting to the crown jewels of software companies by chaining multiple vulnerabilities. It is written by Willem Delbare, leveraging his ten years of experience in building and supporting SaaS startups as a CTO. The tales come directly from Willem’s network, and all of them really happened.