GitHub Actions has been exploited in many recent supply chain attacks, and workflow misconfigurations have played a big role. It's dangerous to go alone! Take this (checklist).

Why are there so many security issues with GitHub Actions?

GitHub Actions, GitHub's built-in CI/CD and automation system, doesn't have inherently weak security, but it sure does have a lot of ways to shoot yourself in the foot.

The platform works as designed, but the defaults are generally set up for convenience and flexibility, not security. pull_request_target exists for a reason, and mutable tags sure are convenient. But those design decisions created attack surface that didn't become obvious until later.

GitHub Actions is also the default CI/CD system for most open source projects, and taking over an open source project gives attackers a path to the largest number of downstream victims. Workflows often hold credentials that publish to npm and PyPI, so a compromised workflow can push a malicious version of a package, and every developer who installs that package gets it too.

Yet another reason the same vectors keep showing up in incidents is that the attack surface has been well documented in public research for years. Attackers sometimes just scan repositories for these misconfigurations to find easy targets (see prt-scan). GitHub is prioritizing stronger protections over the next year, but a lot of responsibility still falls on users to set up GitHub securely and correctly.

Following all the best practices won't make your workflows bulletproof. While you can't fully protect yourself from a compromised maintainer or a zero-day in GitHub's infrastructure, you can close a lot of the vectors that attackers are actively exploiting, and make your repos a harder target than most.

Best practices to keep your GitHub Actions workflows secure

Start here. If you only do five things from this checklist:

- Pin all third-party actions to a full commit SHA

- Set default GITHUB_TOKEN permissions to read-only

- Never use pull_request_target in public repos

- Never interpolate ${{ github.* }} directly into run: steps

- Use OIDC for cloud credentials instead of long-lived secrets

But we recommend you read the checklist in detail, where we explain each piece of GitHub Actions safety advice and why you need it. Check out the tools at the bottom as well, which will help you implement and enforce these best practices.

Trigger configuration

1. Never use pull_request_target in public repos

pull_request_target exists to allow workflows triggered by fork PRs to run with access to base repository secrets. It allows you to automatically label or comment on PRs from outside contributors. It's a nice idea, but the access to secrets makes this dangerous.

Unlike the standard pull_request trigger, pull_request_target runs in the context of the base repository regardless of where the PR comes from. Anyone can open a PR against a public repo. If your workflow triggers on that PR, even if it's not merged, any secrets explicitly referenced in the workflow are loaded into the runner environment and become accessible to whatever code executes in that runner. The attacker's script can easily read any of them with an environment lookup like os.environ.get('MY_SECRET') and send them back to an attacker without leaving evidence.

If you need it in a private repo, require PRs from first-time contributors to get an approval from a maintainer before they can trigger any workflows. GitHub supports this natively under Settings > Actions > Fork pull request workflows.

In the wild: The March 2026 Trivy attack came from exploiting pull_request_target. The engineers thought the workflow was safe because the PR wouldn't be allowed to run anything, but that wasn't sufficient. The PR sent the secrets to the attacker, who used them to move through the project's accounts. Soon after, an attacker started scanning GitHub specifically for repos with pull_request_target enabled and opened a few hundred PRs across them in about a day.
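
For public repos, the safer alternative might look roughly like the sketch below: the standard pull_request trigger with a read-only token, so fork PRs run without base repository secrets. The workflow name, job name, and test command are illustrative assumptions, and <full-commit-sha> is a placeholder for a SHA you have reviewed.

```yaml
# Sketch only: names and commands are assumptions, not from a real project.
name: pr-checks
on:
  pull_request:          # fork PRs run without access to base repo secrets
    branches: [main]

permissions:
  contents: read         # read-only GITHUB_TOKEN for every job in this workflow

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@<full-commit-sha>   # placeholder: pin to a reviewed SHA
      - run: make test                             # hypothetical test command
```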

2. Avoid workflow_run in public repos

workflow_run lets you chain workflows so a downstream workflow triggers when an upstream one completes. The problem is that the downstream workflow runs with write permissions and secret access regardless of what triggered the upstream one, including a fork PR from an external contributor.

If an upstream pull_request workflow is vulnerable to script injection, an attacker can poison the output artifact via a PR. The downstream workflow then consumes that content in a privileged context, so attacker-controlled content from an untrusted PR reaches a workflow with secret access via an extra hop. The attack path is longer than pull_request_target but reaches the same place.

The best fix is to avoid the pattern. If a deployment only needs to happen on pushes to main, trigger it directly with a push on main rather than chaining it through workflow_run. If you really need workflow_run, check github.event.workflow_run.event before taking any privileged action. If the upstream trigger was a pull_request rather than a push from a maintainer, bail out before deploying or writing anything.

    jobs:
      deploy:
        if: github.event.workflow_run.event == 'push'

If you want automated detection across your repos, zizmor will flag workflow_run workflows that take privileged actions without the event check.
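
The direct trigger suggested above, where a deployment runs on pushes to main instead of being chained through workflow_run, could be sketched like this. The job name and deploy script are hypothetical, and <full-commit-sha> is a placeholder.

```yaml
# Sketch: deploy on pushes to main, with no workflow_run hop in between.
on:
  push:
    branches: [main]

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@<full-commit-sha>   # placeholder: pin to a reviewed SHA
      - run: ./scripts/deploy.sh                   # hypothetical deploy script
```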

3. Audit workflows using other privileged triggers

In addition to pull_request_target and workflow_run, watch out for other triggers that run with access to secrets. These include issue_comment, issues, pull_request_review, and pull_request_review_comment. They all run with secret access, and they can all be triggered or influenced by external contributors. The same script injection rule applies: never interpolate values from these events directly into run: steps. We talk more about this in the next section.

Handling untrusted input

1. Prevent script injection by treating all branch names, PR titles, commit messages, and issue bodies as untrusted input

This is the same principle as SQL injection. User-controlled values that end up in a shell command get interpreted as code, not data, so never interpolate github.* values directly into run: steps. The fix is to assign the value to an environment variable first, then reference that variable in the shell script:

    # vulnerable
    - run: echo "Branch is ${{ github.head_ref }}"

    # safe
    - run: echo "Branch is $BRANCH"
      env:
        BRANCH: ${{ github.head_ref }}

GitHub expands ${{ }} expressions into the script text before the shell starts, so an interpolated value gets parsed as shell syntax. When the value is assigned to an env var instead, the shell reads it as a string at runtime. This applies to anything originating from user-controlled input: github.head_ref, github.event.pull_request.title, github.event.issue.body, github.event.commits[0].message, and similar context values. A good SAST scanner like Aikido will catch this and provide the fix in a pull request.

In the wild: In the Ultralytics attack, an attacker named a branch with a curl command that a workflow interpolated directly into a run: step, executing it as code. The Nx/s1ngularity attack, first caught by Aikido Security, combined this with pull_request_target, where a PR to an outdated branch triggered a vulnerable workflow that leaked a GITHUB_TOKEN with read/write permissions, which was then used to publish malicious npm packages.

2. For AI agents in custom workflows, use read-only tokens and keep raw user input out of prompts

AI agents running in GitHub Actions workflows have the same secret access as any other step. If an agent processes issue titles, PR descriptions, or commit messages as part of its prompt, an attacker can put instructions in that text and manipulate the agent into taking privileged actions, like modifying files or exfiltrating data through any tools it can access. Because there's no way to prevent prompt injection in LLMs, don't let AI agents have access beyond reading, and don't allow them to receive raw issue titles, PR descriptions, or commit messages as prompt input.

In the wild: Aikido researchers demonstrated this with PromptPwnd: a malicious issue title fed into a Gemini CLI workflow caused the agent to write repository secrets into a public issue thread using its own gh tool access.

3. Treat LLM-generated output as untrusted input

When a workflow uses an LLM to generate a command, script, or file path and passes that output directly into a run: step, it creates the same injection risk as interpolating a branch name or PR title. LLM output is not guaranteed to be safe, and an attacker who can influence the prompt can influence what gets executed. As with the script injection fix described earlier, assign LLM output to an environment variable first, validate it where possible, and never pipe it directly into a shell command.
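
As a minimal sketch of that advice, the step below reads an LLM-suggested file path from an environment variable and validates it before use. The step id llm and its output path are hypothetical names, and the allowlist is only one possible validation rule.

```yaml
steps:
  - name: Use LLM-suggested file path
    env:
      LLM_PATH: ${{ steps.llm.outputs.path }}   # hypothetical step id and output name
    run: |
      # Accept only relative paths made of safe characters; reject everything else.
      case "$LLM_PATH" in
        ""|/*|*..*|*[!A-Za-z0-9._/-]*) echo "Rejected unexpected path"; exit 1 ;;
      esac
      cat -- "$LLM_PATH"
```

The case patterns reject empty values, absolute paths, anything containing "..", and any character outside the allowlist, so the value never reaches the shell as syntax.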

4. Never write untrusted data to GITHUB_ENV or GITHUB_PATH

Writing to GITHUB_ENV sets environment variables for all subsequent steps in the job. Writing to GITHUB_PATH prepends entries to the system PATH for all subsequent steps. If untrusted content reaches either file, an attacker can set arbitrary environment variables, like NODE_OPTIONS, that trigger code execution. They could also place a malicious binary at the front of the PATH that gets called in place of a trusted tool. The vulnerable pattern is a workflow that downloads an artifact or reads user-controlled input and writes it directly to $GITHUB_ENV without sanitization. Treat anything written to these files with the same caution as a run: step.
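
One way to apply this, sketched under the assumption that a version string arrives in a file from an untrusted artifact (the path /tmp/artifact/version.txt is hypothetical), is to validate the value against a strict pattern before persisting it:

```yaml
steps:
  - name: Export version from a downloaded artifact
    run: |
      # Hypothetical file that came from an untrusted artifact.
      VERSION="$(head -n 1 /tmp/artifact/version.txt)"
      # Persist the value only if it matches a strict x.y.z pattern.
      if printf '%s' "$VERSION" | grep -Eq '^[0-9]+\.[0-9]+\.[0-9]+$'; then
        echo "VERSION=$VERSION" >> "$GITHUB_ENV"
      else
        echo "Refusing to export unexpected value"
        exit 1
      fi
```

Reading a single line and checking it against an allowlist pattern means an attacker can't smuggle extra variables or newlines into the file.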

Artifact handling

5. Extract artifacts to a temporary directory like /tmp rather than the workspace, to avoid overwriting workflow files

Extracting an artifact directly into the workspace could allow an archive with malicious content to overwrite workflow files, scripts, or tooling that subsequent steps depend on. Extracting to /tmp or another isolated directory keeps artifact contents away from anything your workflow treats as trusted. GitHub also supports SHA256 digest verification if you need stronger integrity guarantees.
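
A rough sketch of extracting outside the workspace, using the path input of actions/download-artifact and the runner.temp context. The artifact name is an assumption, and <full-commit-sha> is a placeholder.

```yaml
steps:
  - uses: actions/download-artifact@<full-commit-sha>   # placeholder: pin to a reviewed SHA
    with:
      name: build-output                     # hypothetical artifact name
      path: ${{ runner.temp }}/artifacts     # temp directory, outside the checked-out workspace
```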

6. Exclude secret files (.env, config files, credentials) from uploaded artifacts and avoid path: . patterns that sweep up everything

The path: . pattern in an actions/upload-artifact step uploads everything in the working directory, which may include .env files, config files with embedded credentials, or cached secrets written to disk by other steps. Be explicit about what you're uploading so you don't accidentally publish your credentials to the internet. List specific directories or file types rather than sweeping up the entire workspace, and add .env, *.pem, and similar files to your .gitignore and artifact exclusion patterns.
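
An explicit upload might look like the sketch below, which lists specific file types and uses exclusion patterns. The artifact name and the dist/ layout are assumptions, and <full-commit-sha> is a placeholder.

```yaml
steps:
  - uses: actions/upload-artifact@<full-commit-sha>   # placeholder: pin to a reviewed SHA
    with:
      name: dist                  # hypothetical artifact name
      path: |
        dist/**/*.js
        dist/**/*.css
        !dist/**/*.env
        !dist/**/*.pem
```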

Mutable action references

7. Pin all third-party actions to a full commit SHA, not a tag or branch

Tags are useful as a shorthand, but the tradeoff is that they're not static. Tags and branches are mutable, which means a repo owner can repoint them to a different commit at any time, and that's expected with branch references like @main. When referencing a specific version with uses: some-action@v3, we expect the commit to stay the same. But if the action maintainer's account is compromised, an attacker can repoint that tag to a malicious commit, and every downstream workflow picks it up on the next run without a PR or other indication. To prevent this, the best practice is to pin to the full commit SHA instead (uses: some-action@abc123def456...). This can be a bit annoying to deal with, so you can use Dependabot, Renovate, or pinact to keep pinned SHAs up to date. Native lockfiles are on the GHA roadmap, so hopefully they're available by 2027.
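
If you go the Dependabot route, a minimal .github/dependabot.yml along these lines asks it to open update PRs for the actions your workflows reference. The weekly interval is an arbitrary choice for this sketch.

```yaml
# .github/dependabot.yml
version: 2
updates:
  - package-ecosystem: "github-actions"   # watch actions referenced in workflows
    directory: "/"                        # repo root; workflows live under .github/workflows
    schedule:
      interval: "weekly"
```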

In the wild: In March 2025, attackers repointed 76 of 77 version tags in aquasecurity/trivy-action to malicious commits containing an infostealer. Every workflow referencing those tags by name pulled in the malicious code automatically.

8. Vet third-party actions before adoption

Before adding a uses: line, spend two minutes on the action's repo. Check whether the creator is GitHub-verified, when the repo was last maintained, how many contributors it has, and what its OpenSSF Scorecard score is. A widely-used action from an unverified single maintainer with no recent activity is a high-value target for account takeover.

9. Prefer actions with fewer transitive dependencies

More dependencies leave you more vulnerable to supply chain attacks, so choose actions with fewer dependencies when there are options. An action can itself reference other actions, and those transitive dependencies are resolved at runtime. SHA-pinning your direct dependency doesn't protect you if it pulls in its own dependencies mutably.

In the wild: The tj-actions compromise propagated partly through this mechanism. Downstream workflows pinned to tj-actions/changed-files, but changed-files transitively referenced reviewdog/action-setup by mutable tag, so when reviewdog was compromised, every downstream pipeline ran the malicious code.

Mutable package dependencies

10. Pin npm and PyPI package versions explicitly

Floating version ranges like ^1.2.0 or >=2.0.0 mean your workflow installs whatever the latest matching version is at runtime. If a package is compromised and a new malicious version is published within your range, your workflow pulls it in automatically on the next run. Pin to exact versions (1.2.3) so your workflow only ever installs what you explicitly chose. Don't float on a range.

In the wild: The Ultralytics attack demonstrated how a compromised package version published to PyPI can reach workflows that aren't pinned. The first malicious release was live for hours before detection, long enough to affect builds pulling in floating dependencies.

11. Set minimum release age where your package manager supports it (pnpm, yarn)

Even with pinned versions, a newly published malicious package can sit at an exact version you later choose to upgrade to. Minimum release age settings tell your package manager to refuse packages published less than a specified time ago, typically 72 hours, giving the community time to detect and report malicious releases before they reach your builds. pnpm and yarn support this natively, but npm does not yet. Aikido Safe Chain can cover this for npm (see below).
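
For pnpm, the setting could be sketched as below, assuming a pnpm release recent enough to support minimumReleaseAge in pnpm-workspace.yaml, where the value is given in minutes. Check your pnpm version's documentation before relying on it.

```yaml
# pnpm-workspace.yaml
# Assumption: your pnpm version supports this setting.
minimumReleaseAge: 4320   # minutes, i.e. 72 hours
```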

12. Verify package provenance using attestations where available

Some package registries now support provenance attestations, which are cryptographic records linking a published package to the specific source commit and build pipeline that produced it. Verifying attestations before installing a package confirms it was built from the source it claims. npm supports this for packages published via GitHub Actions. This is still a newer practice and tooling support is not fully there, but it's worth enabling where your registry and package manager support it.
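
With npm, one possible check is to run npm audit signatures after installing from the lockfile. This is a sketch, and it only covers packages that actually publish signatures or attestations.

```yaml
steps:
  - run: npm ci                   # install exactly what the lockfile specifies
  - run: npm audit signatures     # verify registry signatures and provenance attestations
```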

Secrets handling

13. Reference secrets through environment variables, never command-line arguments

Command-line arguments are visible in process listings, so other processes on the runner with access to /proc can read them. Passing a secret as an env var keeps it out of the process table. In your workflow, set the secret in an env: block and reference $SECRET_NAME in the shell command rather than ${{ secrets.MY_SECRET }} inline.
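
A sketch of the env-var pattern, using npm publishing as the example. It assumes a secret named NPM_TOKEN (hypothetical) and relies on the .npmrc that actions/setup-node writes when registry-url is set, which reads the token from NODE_AUTH_TOKEN. The placeholder <full-commit-sha> stands in for a reviewed SHA.

```yaml
steps:
  - uses: actions/setup-node@<full-commit-sha>   # placeholder: pin to a reviewed SHA
    with:
      registry-url: https://registry.npmjs.org   # writes an .npmrc that reads NODE_AUTH_TOKEN
  - name: Publish package
    env:
      NODE_AUTH_TOKEN: ${{ secrets.NPM_TOKEN }}  # hypothetical secret name
    run: npm publish                             # token is read from the environment, not passed as an argument
```

The tool picks the token up from its environment, so the value never appears in an argument list.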

In the wild: tj-actions exfiltrated secrets by printing them to the log (the echo/masking issue), and the Trivy attack resulted in a stolen PAT. Secrets handling is about limiting access to secrets throughout the system so that when something goes wrong, an attacker can't walk away with working credentials.

14. Scope secrets at the repo level to specific GitHub Environments where possible

GitHub Environments let you gate secrets behind deployment protection rules, so a secret like PROD_DB_PASSWORD is only accessible to workflows targeting the production environment. Without this, any workflow in the repo can read any repo-level secret. You can set this up under Settings > Environments.
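
In the workflow, a job opts into an environment with the environment: key. A minimal sketch, assuming an environment named production that holds PROD_DB_PASSWORD and a hypothetical migration script:

```yaml
jobs:
  migrate:
    runs-on: ubuntu-latest
    environment: production     # environment secrets and protection rules apply to this job only
    steps:
      - uses: actions/checkout@<full-commit-sha>   # placeholder: pin to a reviewed SHA
      - env:
          PROD_DB_PASSWORD: ${{ secrets.PROD_DB_PASSWORD }}
        run: ./scripts/migrate.sh                  # hypothetical script
```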

15. Scope secrets in the workflow at the step level, not the job level

Scoping secrets to the individual steps of a job that needs them applies the principle of least privilege to limit the blast radius if an action is compromised. A secret declared in a job-level env: block is readable by every step in that job, including third-party actions.
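
Step-level scoping might look like this sketch, where only the final step receives the secret. The action name some-org/lint-action, the secret name, and the script are hypothetical.

```yaml
jobs:
  release:
    runs-on: ubuntu-latest
    # No job-level env: block here, so earlier steps receive no secrets.
    steps:
      - uses: actions/checkout@<full-commit-sha>      # placeholder: pin to a reviewed SHA
      - uses: some-org/lint-action@<full-commit-sha>  # hypothetical third-party action, gets no secret
      - name: Upload release
        env:
          RELEASE_TOKEN: ${{ secrets.RELEASE_TOKEN }}  # hypothetical secret, visible to this step only
        run: ./scripts/upload-release.sh               # hypothetical script
```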

In the wild: In the Shai-Hulud attack, tokens with workflow scope were reused across victims. A narrower scope would have contained the blast radius.

16. Use OIDC to obtain short-lived cloud credentials instead of long-lived static secrets where the cloud provider supports it (AWS, Azure, GCP)

Static cloud credentials stored as GitHub secrets are valid indefinitely, so if they're stolen, the window for damage is wide open. OIDC lets your workflow request a short-lived token from AWS, Azure, or GCP directly, scoped to the job and valid for minutes, so there are no long-lived credentials for an attacker to steal. All three providers support this natively. Setting this up involves configuring a trust relationship between your cloud provider and GitHub's OIDC endpoint.
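
On the workflow side, an AWS setup could be sketched as follows, assuming the trust relationship already exists. The role ARN, account ID, and region are made-up examples, and <full-commit-sha> is a placeholder.

```yaml
jobs:
  deploy:
    runs-on: ubuntu-latest
    permissions:
      id-token: write    # lets the job request an OIDC token
      contents: read
    steps:
      - uses: aws-actions/configure-aws-credentials@<full-commit-sha>   # placeholder: pin to a reviewed SHA
        with:
          role-to-assume: arn:aws:iam::123456789012:role/example-deploy-role   # hypothetical role
          aws-region: us-east-1                                                # example region
      - run: aws sts get-caller-identity   # confirms which role the short-lived credentials map to
```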

17. Require human approval on workflow runs that use production environments

Configure your GitHub Environment to require that someone reviews a workflow before it can access an environment's secrets or deploy to it. This is more realistic for smaller teams or those that ship infrequently. For larger teams, the more scalable version of this is OIDC with short-lived credentials.

18. Avoid printing or echoing secret values, even in debug output

GitHub masks known secret values in logs, but only for exact matches, so if a secret gets base64-encoded or split across two echo calls, the masking doesn't work. The safest rule is to just never echo secrets at all. If you need to verify that a secret is set, check for a non-empty string rather than printing the value.
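
A check for presence rather than value could be as small as the sketch below. The secret name API_TOKEN is an assumption.

```yaml
steps:
  - name: Verify secret is configured
    env:
      API_TOKEN: ${{ secrets.API_TOKEN }}   # hypothetical secret name
    run: |
      # Test for a non-empty string without ever printing the value.
      if [ -z "$API_TOKEN" ]; then
        echo "API_TOKEN is not set"
        exit 1
      fi
      echo "API_TOKEN is set"
```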

Runners

19. Do not use self-hosted runners on public repos

Anyone can open a PR against a public repo, which means anyone can potentially trigger a workflow run. GitHub's default approval settings for first-time contributors mitigate this on GitHub-hosted runners, where the environment is ephemeral and isolated anyway. On a self-hosted runner, a misconfigured or overly permissive approval setting means that same PR triggers code execution on your infrastructure. GitHub recommends using GitHub-hosted runners for public repos, and tightening the approval policy to "require approval for all outside collaborators."

In the wild: Researchers executed a supply chain attack on PyTorch by submitting a trivial PR that triggered a workflow on a self-hosted runner, gaining root access to the machine.

20. Use ephemeral runners instead of static persistent ones

A static runner carries state between jobs, which means a compromised job can leave malicious files, modified binaries, or poisoned caches that affect the following runs on that machine. Ephemeral runners start clean and are destroyed after each job. Use Actions Runner Controller for Kubernetes-based setups, or pass the --ephemeral flag when registering a runner manually.

In the wild: Shai-Hulud registered persistent self-hosted runners to compromised repos and used them as a persistent C2 channel, invisible to network monitoring because all traffic flowed through github.com.

21. Restrict runner network egress to an allowlist

A compromised workflow's most valuable action is often exfiltrating secrets to an attacker-controlled server. Restricting outbound network access to only the domains your workflow actually needs makes that significantly harder. Harden-Runner and bullfrog both work on GitHub-hosted runners. For self-hosted runners, firewall rules at the network level accomplish the same thing.
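
With Harden-Runner, an allowlist might be sketched like this. The endpoint list is only an example and should reflect what your job actually contacts; <full-commit-sha> is a placeholder.

```yaml
steps:
  - uses: step-security/harden-runner@<full-commit-sha>   # placeholder: pin to a reviewed SHA
    with:
      egress-policy: block          # block outbound traffic that is not on the allowlist
      allowed-endpoints: >
        github.com:443
        api.github.com:443
        registry.npmjs.org:443
```

Starting with egress-policy: audit to observe real traffic first, then switching to block, is a common way to build the list.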

If you're on GitHub Enterprise with self-hosted runners, token IP allowlists add a control in the other direction. They restrict which IPs can use a token at all, so a stolen token can't be used from an attacker's infrastructure.

Token permissions

22. Set default GITHUB_TOKEN permissions to read-only at the org or repo level

The GITHUB_TOKEN is automatically available in every workflow run. Depending on how your org or repo is configured, its default can include extensive read and write access across the repository, so any compromised workflow can write to your repo, create releases, or approve PRs. Set the default to read-only under Settings > Actions > General, then grant write permissions explicitly only where needed.

23. Declare explicit permissions: blocks at the workflow or job level to constrain individual jobs

Setting a read-only default at the org level creates the baseline, but some jobs need more permissions. When a job needs elevated access, declare a permissions: block at the job level rather than the workflow level. That way, the elevated token is scoped to only that job, and every other job in the workflow stays read-only.

    jobs:
      deploy:
        permissions:
          contents: read
          id-token: write

In the wild: The tj-actions, Trivy, and prt-scan attacks all benefited from tokens having more access than necessary. Tightening token permissions reduces blast radius all around.

Org and repo settings

24. Disable the ability for Actions to approve PRs

By default, GitHub allows workflows to approve pull requests using the GITHUB_TOKEN. This means a compromised workflow could approve its own malicious changes without going through any review process. Disable this under Settings > Actions > General > "Allow GitHub Actions to create and approve pull requests." (Some teams leave this on for Dependabot auto-merge, but it's better to give Dependabot a dedicated bot account with explicit reviewer permissions rather than enabling workflow approval for the whole org.)

25. Restrict Action sources to verified creators or specific repos

The default setting in GitHub allows any action published to the GitHub Marketplace to be used in your workflows. Restricting sources to verified creators or a specific allowlist means a newly published malicious action can't be adopted into your workflows without explicit approval. To adopt a new action, developers request approval from whoever owns your GitHub org settings (usually the platform or DevOps team), who can add it to the allowlist. Configure this under Settings > Actions > General > "Allow actions and reusable workflows."

In the wild: The tj-actions compromise affected 23,000 repos partly because teams had no restriction on which actions they could pull in.

26. Monitor for unexpected self-hosted runner registrations and newly created public repositories in your org

A stolen token lets an attacker register a rogue runner to plant a persistent backdoor. Attackers also routinely create public repos to dump stolen credentials. Both runner registration and repo creation are events your team needs to see immediately, but GitHub doesn't alert on them by default. The workaround is to set up the monitoring yourself. Stream your org audit log to a SIEM and alert on self_hosted_runners.register events and repository creation. Without a SIEM, you can poll the audit log via the GitHub API or check manually under Settings > Audit Log.

In the wild: In the Shai-Hulud attack, compromised machines were registered as self-hosted runners named SHA1HULUD, and stolen credentials were used to create public repositories used as exfiltration buckets. Monitoring for both would have flagged the campaign early.

27. Use CODEOWNERS to require security-aware review for changes to .github/workflows/

Workflow files are code that runs with access to your secrets and tokens. Without explicit ownership rules, any developer with repo write access can modify a workflow and merge it without security review. Add a CODEOWNERS entry pointing .github/workflows/ to a reviewer or team that understands the security considerations, and combine it with branch protection rules that require CODEOWNERS approval before merge.

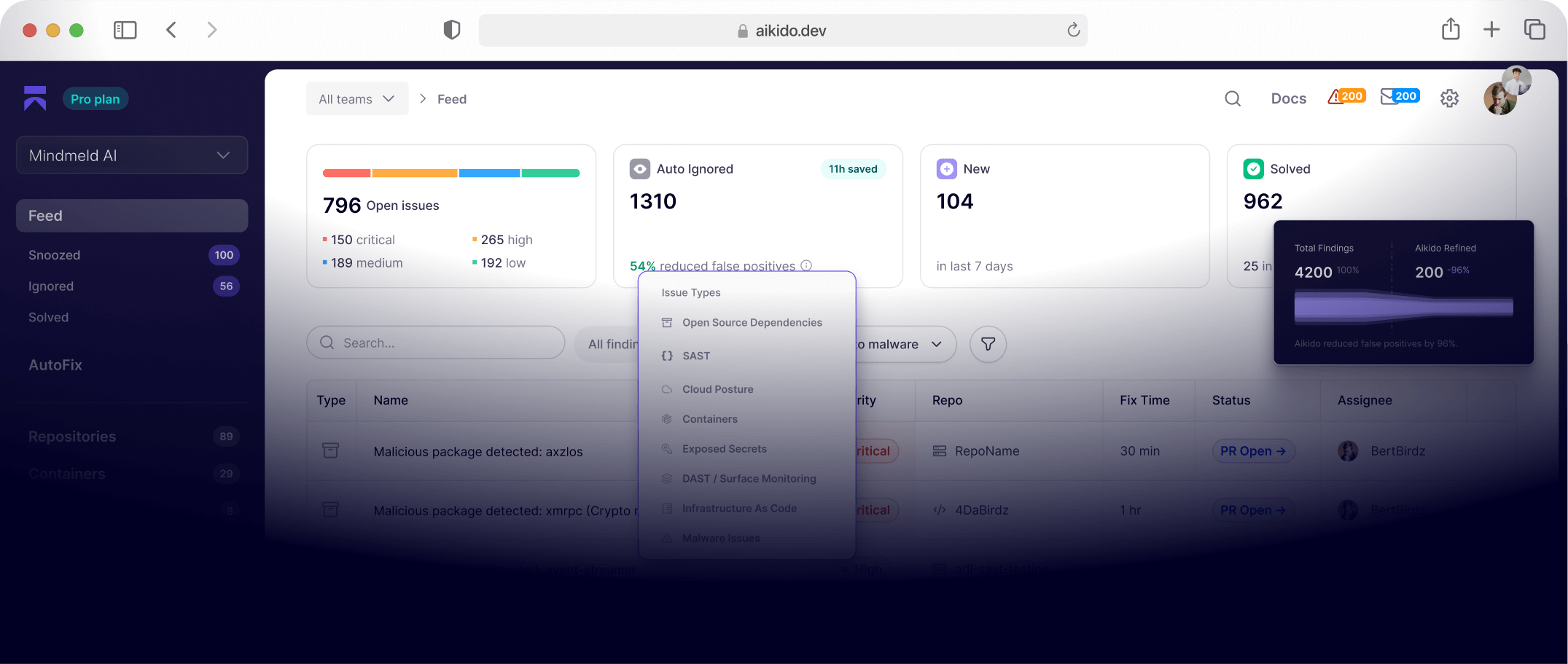

Protect GitHub Actions workflows with Aikido

Aikido detects insecure GitHub Actions workflows and helps developers fix them before they get exploited.

It catches issues like:

- Unsafe use of pull_request_target

- Script injection through untrusted github.* input

- Template and prompt injection in AI-powered workflows

- Unpinned third-party actions

- Overprivileged GITHUB_TOKEN permissions

- Risky secret handling in workflows

Aikido can also:

- Automatically create fix PRs

- Detect unsafe workflow patterns across repositories

- Flag mutable GitHub Action references that should be pinned to a full commit SHA

Aikido Safe Chain helps protect package installs by:

- Blocking known malicious npm and PyPI packages

- Enforcing minimum package age policies

For example, Aikido detects unsanitized user input in run: steps and suggests the safer environment variable pattern automatically.

Ready to secure GitHub Actions workflows

Keep this checklist handy as you work on securing your GitHub Actions workflows. Start with the items that take only a few minutes to set up, and share this with your teammates so everyone is on board with the changes.

FAQ: GitHub Actions security

What is the most common way GitHub Actions workflows get exploited?

Script injection is the most frequent vector. Attackers put malicious code in branch names, PR titles, or issue bodies, and workflows that interpolate those values directly into run: steps execute them as shell commands. Pinning actions to commit SHAs and using environment variables instead of inline interpolation close most of this surface.

What is pull_request_target and why is it dangerous?

pull_request_target is a workflow trigger that runs with access to base repository secrets, even when the triggering PR comes from a fork. Because anyone can open a PR against a public repo, any workflow using this trigger exposes its secrets to external contributors. Avoid it entirely on public repos.

What does "pin actions to a commit SHA" mean and why does it matter?

Most workflows reference third-party actions by tag, like uses: some-action@v3. Tags are mutable, so if an action maintainer's account gets compromised, an attacker can silently repoint that tag to malicious code. Pinning to a full commit SHA like uses: some-action@abc123... means your workflow only ever runs exactly what you reviewed.

Should I use self-hosted runners on public repositories?

Nope. Anyone can open a PR against a public repo and trigger a workflow. On GitHub-hosted runners that's manageable because the environment is ephemeral and isolated. On a self-hosted runner, a misconfigured approval policy means external contributors can execute code directly on your infrastructure.

What is OIDC and why is it better than storing cloud credentials as secrets?

Static credentials stored as GitHub secrets are valid indefinitely, so a stolen secret stays dangerous long after the breach. OIDC lets your workflow request a short-lived token from AWS, Azure, or GCP scoped to that specific job, valid for minutes. There are no long-lived credentials to steal.