Large engineering organizations like to believe their biggest problems are technical. If only someone would approve the budget for the latest tool, everything would be solved. Lately, the prevailing bet is that the silver bullet is vibe coding powered by your favorite flavor of LLM. But the pathologies of large organizations are rarely technical in nature.

In my experience, they are process-oriented problems and can manifest at two different ends of the spectrum. On one end you have teams caught in analysis paralysis, endlessly cycling through meetings, reviews, and consensus-driven design with little to show for it. On the other end, you have the “leap before you look” folks with an execution bias that leads to a lot of self-inflicted wounds due to a lack of introspection.

Somewhere around the moment an organization crosses 5,000 active committers, the nature of security transformation changes entirely. Tooling stops being the limiting factor. Budget stops being the limiting factor. Even talent stops being the limiting factor. What becomes scarce is alignment.

The reality is that by the time a company reaches this size, it already has a functioning security posture, a defined risk tolerance, and a security organization built roughly along familiar staffing ratios. A common pattern is one security professional for every 100 engineers, meaning a company with 5,000 developers likely has a 50-person security team attempting to influence thousands of software decisions per day. The question is not whether security exists. The question is whether it scales.

This article outlines the lessons learned from rolling out developer security programs inside organizations of this scale and why the path to success looks very different from what most CISOs expect.

Why developer security rollouts fail at enterprise scale

Most security rollouts fail for a surprisingly simple reason: they are designed like software deployments instead of cultural changes. Security leaders often begin with the assumption that the right combination of tools (e.g., SAST, SCA, container scanning, secrets detection) will produce improved outcomes if deployed widely enough. But tooling adoption is the easy part.

The hard part is prioritization inside engineering organizations. Developers already have more work than they can complete in a sprint. Product deadlines dominate backlog prioritization. Feature velocity is visible and rewarded. Security fixes often appear abstract and externally imposed. When a security program lands inside that environment, the default response is predictable: security tickets get created, they enter the backlog, and they quietly accumulate.

Nothing about scanning coverage changes this reality. In fact, the more findings you surface without clear ownership, the worse the situation becomes. Security teams often believe they are reducing risk by increasing visibility, but in practice they are sometimes amplifying unmanaged risk: everyone knows the problems exist, but nobody really owns them.

The hard constraints every 5,000+ engineer organization faces

Large engineering organizations operate under a set of structural constraints that smaller companies rarely encounter.

First, there is SDLC fragmentation. A company with thousands of engineers almost certainly runs multiple development lifecycles simultaneously. Some teams deploy daily. Others deploy quarterly. Some rely on legacy systems built a decade ago that engineers are afraid to touch, for fear the systems will stop working entirely and become their personal problem.

Second, there is technology heterogeneity. A typical enterprise environment may include dozens of programming languages, multiple CI/CD systems, several infrastructure platforms, and a mix of cloud-native and legacy workflows. Security tooling that works elegantly in one environment may be nearly impossible to deploy in another.

Third, there is limited central enforcement power and no single system that captures the full scope of an organization's application and cloud attack surface.

Even if the CISO technically reports to the CIO or CTO, security teams rarely control the backlog priorities of product engineering groups. They influence them, but they do not own them. This creates a fundamental tension.

The most dangerous security problems are often the ones that require architectural change, dependency upgrades, or major refactoring: the kinds of work engineers instinctively avoid because they do not fit neatly inside two-week sprint planning.

Tasks estimated above roughly 13 story points often get deferred indefinitely. These are projects masquerading as tickets. The result is a growing backlog of security work that everyone understands intellectually but no one feels empowered or incentivized to prioritize.

The first goal is ownership, not coverage

One of the most common mistakes security leaders make is assuming that tool coverage equals progress. Running scanners across every repository may generate impressive dashboards, but without ownership those dashboards become little more than risk catalogs. Real progress begins with a different question:

Who is responsible for fixing what?

Security programs that scale begin by identifying influencers inside the engineering organization. Not the formal managers. Not the architects on the org chart. But the developers whose opinions actually shape engineering behavior. Every large engineering organization has them. These are the culture-carrying engineers and developers others consult before making architectural decisions. A surprisingly effective approach is a training-of-trainers model:

- Identify 10 master developers respected across the organization

- Train them deeply in secure development practices

- Have them train 100 engineering champions

- Those champions influence their own teams of 50 developers each

Within a few weeks, security practices propagate across thousands of engineers without requiring the security team to directly train everyone. At scale, influence spreads through peer credibility.
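The arithmetic behind this fan-out is worth making explicit. Here is a minimal sketch using the figures from the list above. The function name `training_reach` is hypothetical, and the even split of champions across masters and the absence of overlapping teams are simplifying assumptions, not a description of any real program:

```python
def training_reach(masters: int, champions: int, team_size: int) -> dict:
    """Return fan-out figures for a training-of-trainers rollout.

    Assumes (for illustration only) that champions are split evenly
    across masters and that no developer sits on two champions' teams.
    """
    return {
        "champions_per_master": champions / masters,
        "developers_reached": champions * team_size,
    }


# Figures taken from the list above: 10 masters, 100 champions, teams of 50.
reach = training_reach(masters=10, champions=100, team_size=50)
print(reach)
```

With these numbers, each master developer coaches about 10 champions, and the champions together cover roughly 5,000 developers. In practice teams overlap and vary in size, so treat the result as an upper bound.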

How CISOs choose pilot teams (and why most pilots mislead)

Most enterprise security programs begin with a pilot. This is sensible in theory. But the way pilots are chosen often guarantees misleading results.

Security leaders frequently select one of two kinds of teams:

- The most mature engineering team

- The team most eager to participate

Both produce artificially positive outcomes. High-performing teams already maintain strong engineering hygiene. Their dependency updates are current. Their CI/CD pipelines are modern. Their architecture is actively maintained. Eager teams are self-selected, so their enthusiasm says little about how reluctant teams will respond. Deploying security tooling in either kind of team produces impressive metrics, but also a dangerous illusion that rollouts will scale smoothly. The reality appears later, when the rollout reaches legacy services, understaffed teams, or systems that have not been meaningfully updated in years.

A better pilot selection approach intentionally includes representative complexity:

- A legacy service

- A modern cloud-native team

- A high-velocity product group

- A slow-moving internal platform

The goal of a pilot is to discover friction early.

The four-phase rollout model that works at scale

Successful developer security programs tend to follow a consistent rollout pattern. It rarely happens intentionally, but experienced CISOs eventually converge on the same model.

Phase 1: Visibility without enforcement

The first phase focuses on observability. Security tools run across repositories and infrastructure, but they do not block builds or deployments. Findings are surfaced, categorized, and analyzed. This stage helps security teams answer critical questions:

- Which vulnerabilities appear most frequently?

- Which teams respond quickly?

- Which types of fixes create the most friction?

Treat it as a learning exercise.
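To make "visibility without enforcement" concrete, here is a small sketch of the kind of report-only aggregation this phase relies on. The `Finding` shape, the team names, and the category labels are all made up for illustration; a real scanner export will look different:

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    team: str
    category: str  # hypothetical labels, e.g. "hardcoded-secret"


def summarize(findings: list[Finding]) -> tuple[Counter, Counter]:
    """Count findings per category and per team. Nothing here fails a build."""
    by_category = Counter(f.category for f in findings)
    by_team = Counter(f.team for f in findings)
    return by_category, by_team


# Made-up sample data standing in for a scanner export.
findings = [
    Finding("payments", "hardcoded-secret"),
    Finding("payments", "outdated-dependency"),
    Finding("search", "outdated-dependency"),
    Finding("legacy-billing", "outdated-dependency"),
]
by_category, by_team = summarize(findings)
print(by_category.most_common(1))  # the most frequent category
```

The point of the sketch is what it leaves out: there is no exit code, no threshold, and no gate. The output only answers the first question in the list above, which vulnerabilities appear most frequently.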

Phase 2: Developer feedback loops

Next comes developer engagement. Findings are presented in ways that engineers can actually act upon. False positives are aggressively removed. Documentation improves. Remediation examples are shared. This phase also introduces intrinsic motivation. Developers rarely respond well to top-down enforcement. But they do respond to problem-solving challenges. Some organizations successfully gamify remediation work, allowing teams to compete based on the number of security issues resolved per sprint. When engineers begin fixing issues voluntarily, you know the program is starting to work.

{{false-positives}}

Phase 3: Guardrails and policy

Only after developers trust the system do enforcement mechanisms appear. These usually take the form of guardrails. Examples include:

- Blocking critical vulnerabilities in new dependencies

- Preventing secrets from entering repositories

- Enforcing minimum patch levels for base images

The emphasis remains on preventing new risk, rather than punishing historical debt. The “why” needs to be there alongside the “what” so that we’re not just playing an advanced or accelerated version of whack-a-mole with vulnerabilities and misconfigurations.
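One way to read "prevent new risk, do not punish historical debt" is as a diff against a baseline. The sketch below assumes a hypothetical `DependencyFinding` record and a baseline captured before the guardrail was switched on; it is an illustration of the idea, not how any particular tool implements it:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DependencyFinding:
    package: str   # hypothetical package names below
    severity: str  # "low", "medium", "high", or "critical"


def blocking_findings(baseline, current):
    """Return critical findings present in `current` but not in `baseline`.

    Findings already in the baseline are historical debt: they should
    still be reported elsewhere, but they do not block the change.
    """
    known = set(baseline)
    return [f for f in current if f.severity == "critical" and f not in known]


# Made-up data: one pre-existing critical, plus two newly added dependencies.
baseline = [DependencyFinding("old-xml-lib", "critical")]
current = baseline + [
    DependencyFinding("new-http-lib", "critical"),
    DependencyFinding("new-date-lib", "medium"),
]
blocked = blocking_findings(baseline, current)
print([f.package for f in blocked])
```

Only the newly introduced critical dependency is flagged. The pre-existing critical finding and the new medium-severity one pass through, which keeps the guardrail narrow enough that developers can see why it fired.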

Phase 4: Executive accountability

The final phase introduces leadership visibility. Metrics appear in engineering leadership dashboards:

- Time-to-remediation

- Recurring vulnerability categories

- Security backlog trends

At this point, security becomes part of engineering performance discussions. And that is when the cultural shift becomes palpable and durable.

What not to enforce early (and why)

The fastest way to derail a security rollout is premature enforcement. Common early mistakes include:

- Blocking builds on vulnerability thresholds

- Mandating universal patch deadlines

- Enforcing global severity policies

These actions feel decisive, but they often create a backlash. When engineers suddenly find their deployments blocked by tools they did not ask for, they quickly discover workarounds. They disable scanners and fork pipelines. They ignore alerts. The result is worse security and a damaged relationship between engineering and security teams. Adoption must come before enforcement. Trust must come before control.

The metrics that signal real adoption

Security dashboards often focus on the wrong numbers. Counts of vulnerabilities, scans completed, or repositories analyzed provide visibility but say little about behavioral change.

More meaningful indicators include:

- Fix rate: Are developers actually resolving findings? A rising remediation rate usually signals growing engagement.

- Time to remediation: How quickly are high-severity issues fixed? Organizations with healthy developer security cultures often see critical fixes resolved within days, not weeks.

- Recurring findings: Are the same vulnerabilities appearing repeatedly? If so, the problem is not remediation. It is developer education or architectural patterns.

- Disengagement signals: The earliest and most important warning signs of failure are developers ignoring the system: tickets closed without fixes or links to code commits, complaints of alert fatigue, and sudden drops in remediation activity.
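The first three indicators above can be computed from very little data. Here is a rough sketch, assuming a hypothetical `Finding` record with an opened date and an optional fixed date. The field names, the sample dates, and the recurrence threshold are all assumptions made for illustration:

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Finding:
    category: str
    opened: date
    fixed: Optional[date] = None  # None means the finding is still open


def fix_rate(findings):
    """Fraction of findings that have been fixed (0.0 for an empty list)."""
    if not findings:
        return 0.0
    return sum(f.fixed is not None for f in findings) / len(findings)


def median_days_to_fix(findings):
    """Median days from opening to fix, over fixed findings only."""
    return median((f.fixed - f.opened).days for f in findings if f.fixed)


def recurring_categories(findings, threshold=2):
    """Categories that appear at least `threshold` times."""
    counts = Counter(f.category for f in findings)
    return sorted(c for c, n in counts.items() if n >= threshold)


# Made-up sample data: three fixed findings and one still open.
findings = [
    Finding("sql-injection", date(2024, 1, 1), date(2024, 1, 3)),
    Finding("sql-injection", date(2024, 1, 5), date(2024, 1, 9)),
    Finding("hardcoded-secret", date(2024, 1, 2), date(2024, 1, 8)),
    Finding("outdated-dependency", date(2024, 1, 4)),
]
print(fix_rate(findings), median_days_to_fix(findings), recurring_categories(findings))
```

A median is used rather than a mean so that one long-lived finding does not dominate the number. Disengagement signals are harder to compute and usually need ticket and commit metadata that this sketch does not model.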

When a security program rollout is failing

Even well-designed rollouts encounter turbulence. Experienced security leaders recognize the warning signs early:

- Backlogs growing faster than fixes

- Engineers bypassing scanning tools

- Security champions losing influence

When this happens, the instinct is often to tighten enforcement, but that is almost always the wrong move. Instead, successful CISOs pause and ask three questions:

- Are we surfacing too many issues at once?

- Are developers given clear remediation guidance?

- Are we prioritizing the vulnerabilities that actually matter?

The last question leads to one of the most important principles in large-scale security programs:

The Pareto Principle applies here: in most environments, roughly 20% of vulnerabilities account for 80% of real organizational risk. Security programs that focus on those high-impact issues dramatically reduce risk while minimizing developer friction. Programs that attempt to fix everything simultaneously collapse under their own weight.
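As a rough illustration of that 80/20 cut, the sketch below picks the smallest set of findings, highest risk first, that covers a target share of total risk. The finding names and risk scores are invented. How you score real risk (exposure, reachability, data sensitivity) is the hard part, and it is outside this example:

```python
def top_risk_slice(risk_by_finding, target_share=0.8):
    """Return the highest-risk findings that together cover `target_share` of total risk."""
    total = sum(risk_by_finding.values())
    ranked = sorted(risk_by_finding.items(), key=lambda kv: kv[1], reverse=True)
    selected, covered = [], 0.0
    for name, risk in ranked:
        if covered >= target_share * total:
            break
        selected.append(name)
        covered += risk
    return selected


# Hypothetical risk scores: two findings dominate, eight form a long tail.
risk_scores = {
    "exposed-admin-api": 55,
    "leaked-cloud-key": 30,
    "tail-finding-1": 2,
    "tail-finding-2": 2,
    "tail-finding-3": 2,
    "tail-finding-4": 2,
    "tail-finding-5": 2,
    "tail-finding-6": 2,
    "tail-finding-7": 2,
    "tail-finding-8": 1,
}
selected = top_risk_slice(risk_scores)
print(selected)
```

In this made-up data set, two of the ten findings carry 85% of the scored risk, so those two are what developers would be asked to fix first. Real distributions will not be this tidy, but the ranking step is the same.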

Embedding security thinking into the SDLC

A long-term developer security program ultimately moves upstream. Instead of reacting to vulnerability reports, organizations begin preventing them during design and development.

One of the most effective tools for this transformation is threat modeling. Many developers encounter security only when a scanner flags an issue. They learn the rule but not the reasoning. Threat modeling changes that dynamic.

It helps developers understand:

- Why session storage decisions matter

- How authentication patterns create attack surfaces

- Why OWASP Top 10 issues appear repeatedly

When engineers understand the “why”, they stop seeing security fixes as external demands and begin designing systems that avoid those problems entirely. Pairing junior developers with experienced engineers accelerates this learning even further. Senior developers naturally pass down habits such as documentation discipline, automated testing, and secure infrastructure configuration. Security becomes less about scanning code and more about how engineers think while writing it.

The one rule that determines success at scale

After watching dozens of developer security programs succeed or fail, one principle consistently determines the outcome: security must reduce developer cognitive load, not increase it.

If tools generate overwhelming noise, engineers disengage. If remediation guidance is unclear, engineers postpone fixes. If enforcement arrives before trust, engineers bypass controls. But when security tools:

- surface actionable findings

- prioritize meaningful risk

- integrate into existing workflows

developers respond the way they always do: they solve the problem.

And when enough of them begin solving it, something remarkable happens. Security becomes a habit.

And in an organization with 5,000 committers, habits are what ultimately determine the security posture of the entire company.

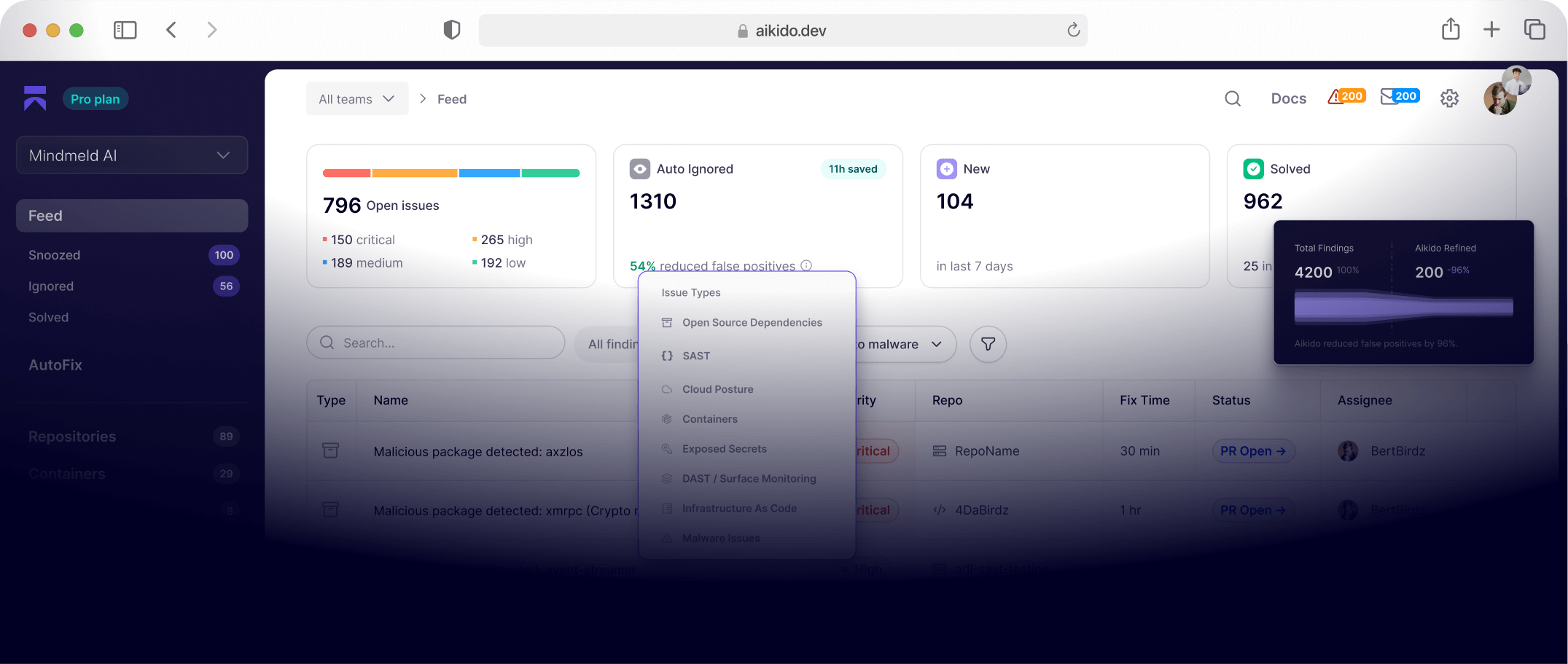

These lessons heavily influenced the design philosophy behind modern developer security platforms like Aikido, a system built to surface meaningful risk while minimizing the cognitive burden on developers.

{{walkthrough}}

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@graph": [

{

"@type": "WebPage",

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#webpage",

"url": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization",

"name": "Rolling Out Developer Security in a 5,000+ Engineer Organization",

"description": "A practitioner's guide by enterprise CISO Mike Wilkes on why developer security rollouts fail at scale and how to fix them. Covers the structural constraints of large engineering organizations, a four-phase rollout model, pilot team selection, security ownership strategy, metrics that signal real adoption, and how to embed security thinking into the SDLC through threat modeling and training-of-trainers programs.",

"inLanguage": "en-US",

"isPartOf": {

"@type": "WebSite",

"@id": "https://www.aikido.dev#website",

"url": "https://www.aikido.dev",

"name": "Aikido Security",

"publisher": {

"@id": "https://www.aikido.dev#organization"

}

},

"breadcrumb": {

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#breadcrumb"

},

"mainEntity": {

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#article"

},

"speakable": {

"@type": "SpeakableSpecification",

"cssSelector": ["h1", "h2", ".article-intro"]

}

},

{

"@type": "BreadcrumbList",

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#breadcrumb",

"itemListElement": [

{

"@type": "ListItem",

"position": 1,

"name": "Home",

"item": "https://www.aikido.dev"

},

{

"@type": "ListItem",

"position": 2,

"name": "Blog",

"item": "https://www.aikido.dev/blog"

},

{

"@type": "ListItem",

"position": 3,

"name": "Rolling Out Developer Security in a 5,000+ Engineer Organization",

"item": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization"

}

]

},

{

"@type": "TechArticle",

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#article",

"mainEntityOfPage": {

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#webpage"

},

"headline": "Rolling Out Developer Security in a 5,000+ Engineer Organization",

"alternativeHeadline": "Why Developer Security Rollouts Fail at Enterprise Scale and How to Fix Them",

"description": "Enterprise CISO Mike Wilkes argues that developer security rollouts fail not because of tooling gaps but because they are designed like software deployments instead of cultural changes. At organizations with 5,000 or more active committers, the limiting factor is alignment, not budget or talent. This guide covers the structural constraints unique to large engineering organizations — including SDLC fragmentation, technology heterogeneity, and the absence of central enforcement power — and presents a four-phase rollout model moving from visibility without enforcement, through developer feedback loops and guardrails, to executive accountability. It introduces a training-of-trainers approach to propagating security culture through peer credibility rather than mandates, explains how pilot team selection typically misleads security leaders, and outlines the metrics that distinguish genuine behavioral adoption from dashboard noise. The article closes with guidance on embedding threat modeling into the SDLC to shift security upstream from reactive scanning to proactive design.",

"url": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization",

"datePublished": "2026-05-06T00:00:00+00:00",

"dateModified": "2026-05-06T00:00:00+00:00",

"inLanguage": "en-US",

"author": {

"@id": "https://www.aikido.dev/authors/nicholas-thomson#person"

},

"publisher": {

"@id": "https://www.aikido.dev#organization"

},

"image": {

"@type": "ImageObject",

"@id": "https://www.aikido.dev/blog/rolling-out-developer-security-5000-engineer-organization#image",

"url": "https://www.aikido.dev/images/blog/rolling-out-developer-security-5000-engineer-organization.png",

"width": 1200,

"height": 630

},

"articleSection": "Developer Security",

"timeRequired": "PT12M",

"proficiencyLevel": "Expert",

"keywords": [

"developer security rollout",

"enterprise AppSec",

"CISO strategy",

"security program at scale",

"SDLC security",

"security culture",

"DevSecOps",

"security ownership",

"training-of-trainers security",

"security champions program",

"vulnerability prioritization",

"Pareto principle security",

"threat modeling SDLC",

"developer cognitive load",

"security backlog management",

"SAST SCA deployment",

"secrets detection",

"container scanning enterprise",

"security pilot teams",

"premature enforcement anti-pattern"

],

"about": [

{

"@type": "Thing",

"name": "Developer Security Programs",

"description": "Structured organizational initiatives that embed security practices, tooling, and accountability into engineering workflows at scale.",

"sameAs": "https://www.wikidata.org/wiki/Q25263461"

},

{

"@type": "Thing",

"name": "Security Culture in Engineering Organizations",

"description": "The set of shared values, behaviors, and incentives that determine how software engineers perceive and respond to security requirements in their daily work."

},

{

"@type": "Thing",

"name": "SDLC Fragmentation",

"description": "The condition in large engineering organizations where multiple software development lifecycles operate simultaneously, ranging from daily deployments to quarterly release cycles, making uniform security tooling deployment difficult."

},

{

"@type": "Thing",

"name": "Security Ownership",

"description": "The organizational principle that specific teams or individuals are accountable for remediating identified vulnerabilities, as distinct from teams that merely have visibility into them."

},

{

"@type": "Thing",

"name": "Training-of-Trainers Security Model",

"description": "A peer-credibility approach to scaling security education in large organizations by training a small group of respected senior developers who then train a broader group of engineering champions."

},

{

"@type": "Thing",

"name": "Threat Modeling",

"description": "A structured approach to identifying security risks during the design phase of software development, helping engineers understand attack surfaces before code is written.",

"sameAs": "https://en.wikipedia.org/wiki/Threat_model"

},

{

"@type": "Thing",

"name": "Vulnerability Prioritization",

"description": "The practice of ranking security findings by organizational risk impact rather than raw severity score, often applying the Pareto principle to focus remediation effort on the 20% of vulnerabilities that represent 80% of real risk."

},

{

"@type": "Thing",

"name": "Premature Enforcement Anti-Pattern",

"description": "The common mistake of introducing blocking enforcement mechanisms before developers trust security tooling, leading to workarounds, scanner disablement, and damaged relationships between security and engineering teams."

},

{

"@type": "Thing",

"name": "Application Security",

"sameAs": "https://en.wikipedia.org/wiki/Application_security"

},

{

"@type": "Thing",

"name": "DevSecOps",

"sameAs": "https://en.wikipedia.org/wiki/DevOps#DevSecOps"

}

],

"mentions": [

{

"@type": "Thing",

"name": "SAST",

"description": "Static Application Security Testing — automated analysis of source code to identify security vulnerabilities before deployment."

},

{

"@type": "Thing",

"name": "SCA",

"description": "Software Composition Analysis — scanning of open source dependencies and third-party libraries for known vulnerabilities."

},

{

"@type": "Thing",

"name": "Secrets Detection",

"description": "Automated scanning of repositories and pipelines to identify exposed credentials, API keys, and other secrets before they reach production."

},

{

"@type": "Thing",

"name": "Container Scanning",

"description": "Security analysis of container images to identify vulnerabilities in base images, dependencies, and configurations."

},

{

"@type": "Thing",

"name": "OWASP Top 10",

"description": "The Open Worldwide Application Security Project's ranked list of the most critical web application security risks.",

"sameAs": "https://owasp.org/www-project-top-ten/"

},

{

"@type": "Thing",

"name": "CI/CD Pipeline Security",

"description": "Security controls and scanning integrated into continuous integration and continuous deployment pipelines to catch vulnerabilities before code reaches production."

},

{

"@type": "Thing",

"name": "Security Champions Program",

"description": "A distributed model where embedded developers within engineering teams serve as security advocates and points of contact, bridging the gap between central security teams and product engineering groups."

},

{

"@type": "Thing",

"name": "Sprint Backlog Prioritization",

"description": "The process by which engineering teams rank and schedule work within two-week development cycles, where security tasks frequently compete with and lose to feature delivery deadlines."

},

{

"@type": "Thing",

"name": "Pareto Principle in Security",

"description": "The application of the 80/20 rule to vulnerability management, where roughly 20% of identified vulnerabilities typically account for 80% of real organizational risk."

},

{

"@type": "SoftwareApplication",

"name": "Aikido Security",

"description": "A developer security platform designed to surface meaningful risk while minimizing cognitive burden on engineering teams, supporting SAST, SCA, secrets detection, container scanning, and cloud security.",

"url": "https://www.aikido.dev",

"applicationCategory": "SecurityApplication"

}

],

"hasPart": [

{

"@type": "HowTo",

"name": "Four-Phase Developer Security Rollout Model for Enterprise Organizations",

"description": "A proven phased framework for rolling out developer security programs in organizations with 5,000 or more engineers, designed to build trust before enforcement and prioritize cultural adoption over tool deployment.",

"step": [

{

"@type": "HowToStep",

"position": 1,

"name": "Phase 1: Visibility Without Enforcement",

"text": "Deploy security tools across repositories and infrastructure in observation-only mode. Do not block builds or deployments. Surface, categorize, and analyze findings to identify which vulnerabilities appear most frequently, which teams respond quickly, and which types of fixes create the most resistance. The goal at this stage is learning, not control."

},

{

"@type": "HowToStep",

"position": 2,

"name": "Phase 2: Developer Feedback Loops",

"text": "Present findings in ways engineers can act on directly. Aggressively remove false positives, improve remediation documentation, and share concrete fix examples. Introduce intrinsic motivation by framing security as a problem-solving challenge. Some organizations gamify remediation by letting teams compete on issues resolved per sprint. When engineers begin fixing issues voluntarily, the program is gaining genuine traction."

},

{

"@type": "HowToStep",

"position": 3,

"name": "Phase 3: Guardrails and Policy",

"text": "Only after developers trust the tooling should enforcement mechanisms be introduced. These should take the form of guardrails rather than hard gates — blocking critical vulnerabilities in new dependencies, preventing secrets from entering repositories, enforcing minimum patch levels for base images. The emphasis is on preventing new risk, not punishing historical debt. Always pair the enforcement rule with the reasoning behind it."

},

{

"@type": "HowToStep",

"position": 4,

"name": "Phase 4: Executive Accountability",

"text": "Surface security metrics inside engineering leadership dashboards, including time-to-remediation, recurring vulnerability categories, and security backlog trends. When security becomes part of engineering performance discussions rather than isolated security team reports, the cultural shift becomes durable."

}

]

},

{

"@type": "HowTo",

"name": "Training-of-Trainers Model for Security Culture Propagation",

"description": "A peer-credibility approach that scales security knowledge across thousands of engineers without requiring the central security team to train everyone directly.",

"step": [

{

"@type": "HowToStep",

"position": 1,

"name": "Identify master developers",

"text": "Identify 10 senior developers who are widely respected across the engineering organization — not necessarily formal managers or architects, but the people others consult before making architectural decisions."

},

{

"@type": "HowToStep",

"position": 2,

"name": "Train master developers deeply",

"text": "Train this group deeply in secure development practices, threat modeling, and the reasoning behind security requirements, not just the rules themselves."

},

{

"@type": "HowToStep",

"position": 3,

"name": "Expand to 100 engineering champions",

"text": "Have the master developers train 100 engineering champions drawn from teams across the organization, creating a distributed network of security advocates embedded in product engineering."

},

{

"@type": "HowToStep",

"position": 4,

"name": "Propagate to teams at scale",

"text": "Each champion influences their own team of roughly 50 developers. Within weeks, security practices propagate across thousands of engineers through peer credibility rather than central mandates."

}

]

}

]

},

{

"@type": "Person",

"@id": "https://www.aikido.dev/authors/nicholas-thomson#person",

"name": "Nicholas Thomson",

"url": "https://www.aikido.dev/authors/nicholas-thomson",

"jobTitle": "Senior SEO & Growth Lead",

"worksFor": {

"@id": "https://www.aikido.dev#organization"

},

"sameAs": [

"https://www.linkedin.com/",

"https://x.com/"

]

},

{

"@type": "Organization",

"@id": "https://www.aikido.dev#organization",

"name": "Aikido Security",

"description": "Aikido Security is a developer security platform that surfaces meaningful risk while minimizing cognitive burden on engineering teams, combining SAST, SCA, secrets detection, container scanning, and cloud security in a single developer-friendly interface.",

"url": "https://www.aikido.dev",

"logo": {

"@type": "ImageObject",

"url": "https://www.aikido.dev/logo.png"

},

"sameAs": [

"https://www.linkedin.com/company/aikido-security",

"https://twitter.com/aikido_security"

]

}

]

}

</script>