At a glance

- Augmented annual manual pentests with AI-driven white-box testing

- Identified a complex, edge-case vulnerability through context-aware exploration

- Embedded AI pentesting into continuous vulnerability management

- Reduced manual security reporting from days per month to hours

- Enforced security directly in pull request workflows

Challenge

TechWolf builds an AI-driven data layer that helps enterprises understand jobs, tasks, and skills as they navigate workforce transformation. Operating in HR tech means working with sensitive business and personal information. Security is not a feature. It is a prerequisite.

TechWolf has long invested in its security posture. The company maintains ISO 27001 certification, completes yearly SOC 2 audits, runs annual external penetration tests, and uses SAST, SCA, and DAST tooling across its stack.

But security maturity is not static.

“One of our values is ‘Aim for the moon’. For me, that includes raising the security bar year after year,” said Kilian, Security Operations Engineer at TechWolf.

Over time, manual pentests began yielding fewer obvious findings. That was a good sign. Still, the team knew that in a growing and evolving codebase, nuanced edge cases can remain difficult to surface within a fixed-time engagement.

TechWolf wanted to go one step further.

“While we conduct a yearly manual penetration test with an external vendor, we found fewer major issues, and we believed there was more risk hiding deeper in the codebase.”

Adding AI pentesting to extend the security program

TechWolf decided to trial Aikido’s AI pentesting as an extension of its existing program. The setup was straightforward because their environment was already connected inside Aikido.

“I spent less than 15 minutes on the setup. Within a few hours, the agents had already surfaced something interesting.”

Unlike a traditional engagement constrained by time and scope, the AI agents were able to analyze source code context directly, explore edge cases, and generate reproducible attack paths.

“I believe the effectiveness lies in Aikido’s LLM pentests utilizing far more context than a manual pentester ever could, specifically by analyzing the source code.”

For TechWolf, this was not about replacing manual testing. It was about complementing it.

“Both approaches have their place. AI pentesting adds persistence and depth, especially in areas that are harder to reach within a time-boxed engagement.”

What the AI pentest uncovered

During the trial, Aikido’s AI pentest identified a complex vulnerability rooted in application logic.

It was not a superficial misconfiguration or a simple missing check. It required understanding how specific components interacted across different parts of the codebase.

“We were impressed by the depth,” Kilian said. “It wasn’t about finding something basic. It was about exploring parts of the application that are naturally harder to reach.”

The AI agents generated a detailed attack analysis along with a proof-of-concept script, allowing the team to verify and reproduce the issue quickly.

“The PoC scripts were crucial,” Kilian said. “They eliminate doubt, reduce false positives, and make it easier for engineers to understand exactly what needs to be fixed.”

For TechWolf, this validated the decision to expand its testing strategy. Manual pentests remain an important pillar of their program. AI pentesting adds a layer of context-aware exploration that strengthens overall coverage.

Beyond pentesting: consolidating security into one platform

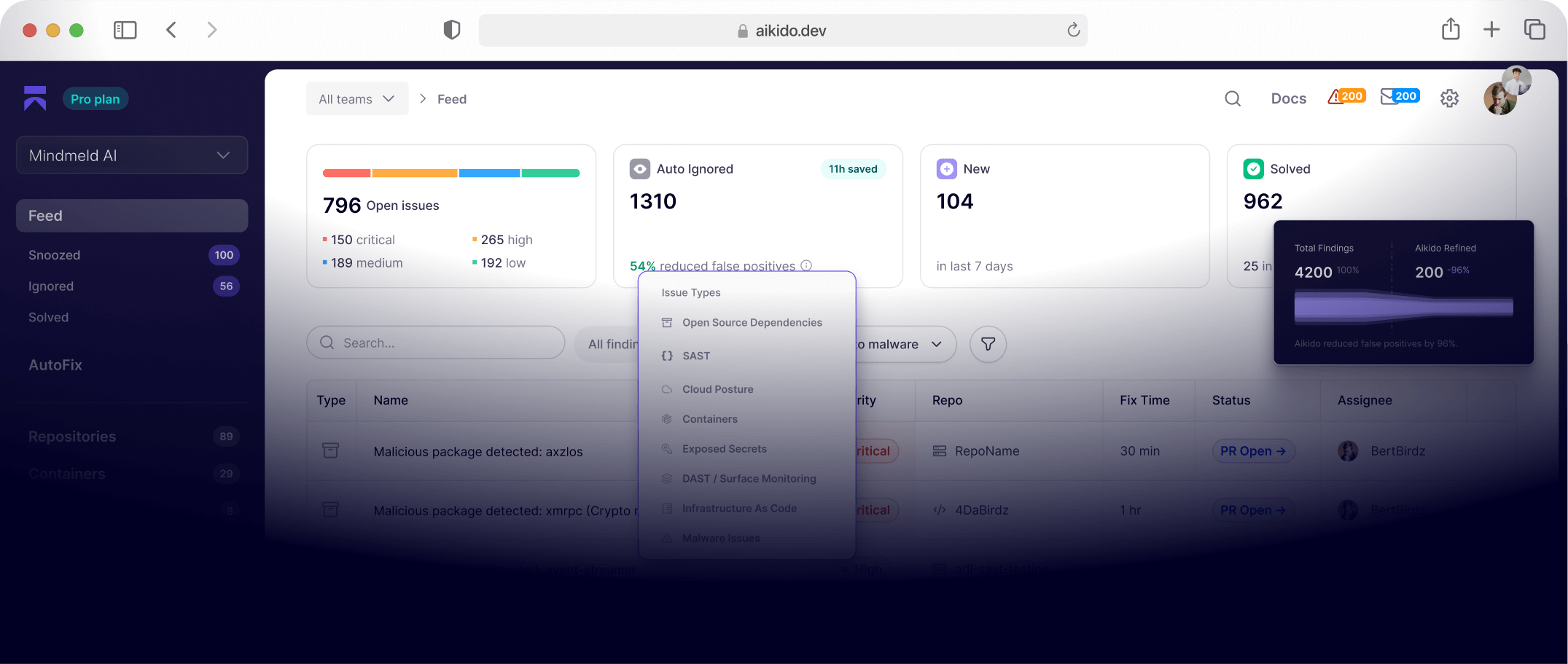

While the AI pentest provided immediate value, the broader impact came from using Aikido as a central vulnerability management platform.

“We already utilize standard security tools like SAST, SCA, DAST, and traditional penetration tests,” Kilian said. “However, these are often isolated, making it difficult to prioritize from various siloed findings.”

Aikido consolidates findings across tools, including pentest results, and automatically prioritizes them through its AutoTriage functionality.

“Aikido’s key value is ensuring we focus our efforts on the most critical issues first through its AutoTriage functionality, while keeping track of all other findings.”
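To make the idea of consolidating siloed findings concrete, here is a minimal sketch of merging reports from several tools and ranking them by severity. It is not Aikido's AutoTriage logic, which the source does not describe. The `Finding` fields, the severity scale, and the `consolidate` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical severity scale; lower rank means more urgent.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Finding:
    source: str    # assumed tool label, e.g. "sast", "sca", "dast", "pentest"
    location: str  # file path, dependency name, or endpoint
    rule: str      # identifier of the issue type
    severity: str  # one of the SEVERITY_RANK keys


def consolidate(findings):
    """Merge findings that share a location and rule, then sort most severe first."""
    merged = {}
    for finding in findings:
        key = (finding.location, finding.rule)
        current = merged.get(key)
        # Keep the most severe report when several tools flag the same issue.
        if current is None or SEVERITY_RANK[finding.severity] < SEVERITY_RANK[current.severity]:
            merged[key] = finding
    return sorted(
        merged.values(),
        key=lambda f: (SEVERITY_RANK[f.severity], f.location),
    )
```

A real triage engine would likely weigh more than severity, such as reachability or exposure. The sketch only shows why a single ranked list is easier to act on than separate per-tool reports.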

Security is now enforced directly in the development workflow. Pull requests cannot be merged while unresolved vulnerabilities remain, preventing issues from reaching production in the first place.
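A merge gate of this kind can be sketched as a small check that a CI job runs on each pull request. The example below is an illustration only, not TechWolf's or Aikido's implementation. The finding dictionaries, the `status` field, and both function names are hypothetical.

```python
def blocking_findings(findings):
    """Return every finding that has not been marked as resolved."""
    return [f for f in findings if f.get("status") != "resolved"]


def check_exit_code(findings):
    """Return 0 when the pull request may merge, 1 when it must be blocked."""
    blockers = blocking_findings(findings)
    for finding in blockers:
        # Print each blocker so the author sees what to fix.
        print(f"BLOCKED: {finding['id']} ({finding['severity']})")
    return 1 if blockers else 0
```

A CI step could pass the result to `sys.exit`, so that a non-zero exit code fails the required check and prevents the merge.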

Results

Over time, the operational improvements became clear.

Before Aikido, Kilian spent significant time consolidating findings from different tools, preparing audit documentation, and manually prioritizing vulnerabilities.

Now, vulnerabilities are automatically prioritized and assigned to the relevant teams.

“The most significant measurable improvement is the reduction in manual security operations time,” Kilian said.

Measurable improvements


Final verdict

For TechWolf, adopting AI pentesting was not about replacing existing controls or partners. It was about continuing to raise the bar.

“For us, it’s about raising the bar continuously,” Kilian said.

“Aikido helps us stay proactive, prioritize the right issues, and spend less time on operational overhead.”

By combining annual manual pentests with context-aware AI testing and centralized vulnerability management, TechWolf has strengthened an already mature security program, adding depth, visibility, and efficiency without disrupting existing processes.